Compare commits

19 Commits: feat/unifa...v3.5.3

| Author | SHA1 | Date |
|---|---|---|
| | 400bb72217 | |
| | a0a12d5eca | |
| | a34f376da0 | |
| | 2b29706615 | |
| | f6d3cf33f0 | |
| | 0eb042425c | |
| | 35c0b6d539 | |
| | 13c4ac83d8 | |
| | 6ce397b811 | |
| | 9bf54f5f78 | |
| | c87ec1ad0f | |
| | 9e56a86963 | |
| | 426bd71505 | |
| | ede8b27091 | |
| | 02c77ce5db | |
| | d70d6a254f | |
| | 7d37633b1a | |
| | bc413df4a8 | |
| | 8db0577991 | |

24

.github/workflows/ci.yml

vendored

@@ -1,4 +1,4 @@

|

||||

name: Build

|

||||

name: CI

|

||||

|

||||

on:

|

||||

push:

|

||||

@@ -17,10 +17,10 @@ jobs:

|

||||

runs-on: ubuntu-latest

|

||||

timeout-minutes: 5

|

||||

steps:

|

||||

- uses: actions/checkout@v4

|

||||

- uses: actions/setup-python@v5

|

||||

- uses: actions/checkout@v5

|

||||

- uses: actions/setup-python@v6

|

||||

with:

|

||||

python-version: "3.10"

|

||||

python-version: "3.11"

|

||||

- uses: pre-commit/action@v3.0.1

|

||||

|

||||

test:

|

||||

@@ -35,8 +35,14 @@ jobs:

|

||||

# Full Python range on Linux (fastest runner)

|

||||

- os: ubuntu-latest

|

||||

python-version: "3.10"

|

||||

- os: ubuntu-latest

|

||||

python-version: "3.11"

|

||||

- os: ubuntu-latest

|

||||

python-version: "3.12"

|

||||

- os: ubuntu-latest

|

||||

python-version: "3.13"

|

||||

- os: ubuntu-latest

|

||||

python-version: "3.14"

|

||||

- os: macos-latest

|

||||

python-version: "3.13"

|

||||

- os: windows-latest

|

||||

@@ -44,10 +50,10 @@ jobs:

|

||||

|

||||

steps:

|

||||

- name: Checkout code

|

||||

uses: actions/checkout@v4

|

||||

uses: actions/checkout@v5

|

||||

|

||||

- name: Set up Python ${{ matrix.python-version }}

|

||||

uses: actions/setup-python@v5

|

||||

uses: actions/setup-python@v6

|

||||

with:

|

||||

python-version: ${{ matrix.python-version }}

|

||||

cache: "pip"

|

||||

@@ -55,7 +61,7 @@ jobs:

|

||||

- name: Install dependencies

|

||||

run: |

|

||||

python -m pip install --upgrade pip

|

||||

python -m pip install .[dev]

|

||||

python -m pip install ".[cpu,dev]"

|

||||

|

||||

- name: Check ONNX Runtime providers

|

||||

run: |

|

||||

@@ -74,10 +80,10 @@ jobs:

|

||||

|

||||

steps:

|

||||

- name: Checkout code

|

||||

uses: actions/checkout@v4

|

||||

uses: actions/checkout@v5

|

||||

|

||||

- name: Set up Python

|

||||

uses: actions/setup-python@v5

|

||||

uses: actions/setup-python@v6

|

||||

with:

|

||||

python-version: "3.11"

|

||||

cache: "pip"

|

||||

|

||||

10

.github/workflows/docs.yml

vendored

@@ -1,8 +1,6 @@

|

||||

name: Deploy docs

|

||||

name: Deploy Documentation

|

||||

|

||||

on:

|

||||

push:

|

||||

branches: [main]

|

||||

workflow_dispatch:

|

||||

|

||||

permissions:

|

||||

@@ -12,11 +10,11 @@ jobs:

|

||||

deploy:

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- uses: actions/checkout@v4

|

||||

- uses: actions/checkout@v5

|

||||

with:

|

||||

fetch-depth: 0 # Fetch full history for git-committers and git-revision-date plugins

|

||||

fetch-depth: 0

|

||||

|

||||

- uses: actions/setup-python@v5

|

||||

- uses: actions/setup-python@v6

|

||||

with:

|

||||

python-version: "3.11"

|

||||

|

||||

|

||||

221

.github/workflows/pipeline.yml

vendored

Normal file

@@ -0,0 +1,221 @@

|

||||

name: Release Pipeline

|

||||

|

||||

on:

|

||||

workflow_dispatch:

|

||||

inputs:

|

||||

version:

|

||||

description: 'Version (e.g. 3.6.0, 3.6.0b1, 3.6.0rc1)'

|

||||

required: true

|

||||

|

||||

concurrency:

|

||||

group: pipeline

|

||||

cancel-in-progress: false

|

||||

|

||||

jobs:

|

||||

validate:

|

||||

runs-on: ubuntu-latest

|

||||

timeout-minutes: 5

|

||||

outputs:

|

||||

is_prerelease: ${{ steps.prerelease.outputs.is_prerelease }}

|

||||

|

||||

steps:

|

||||

- name: Checkout code

|

||||

uses: actions/checkout@v5

|

||||

|

||||

- name: Set up Python

|

||||

uses: actions/setup-python@v6

|

||||

with:

|

||||

python-version: "3.11"

|

||||

|

||||

- name: Validate version (PEP 440)

|

||||

run: |

|

||||

python - <<'EOF'

|

||||

import re, sys

|

||||

v = "${{ inputs.version }}"

|

||||

if not re.fullmatch(r'\d+\.\d+\.\d+((a|b|rc)\d+|\.dev\d+)?', v):

|

||||

print(f"Invalid version: {v}")

|

||||

print("Expected forms: 3.6.0, 3.6.0a1, 3.6.0b1, 3.6.0rc1, 3.6.0.dev1")

|

||||

sys.exit(1)

|

||||

EOF

|

||||

|

||||

- name: Check tag does not exist

|

||||

run: |

|

||||

if git rev-parse "v${{ inputs.version }}" >/dev/null 2>&1; then

|

||||

echo "Tag v${{ inputs.version }} already exists."

|

||||

exit 1

|

||||

fi

|

||||

|

||||

- name: Detect pre-release

|

||||

id: prerelease

|

||||

run: |

|

||||

if [[ "${{ inputs.version }}" =~ (a|b|rc|\.dev)[0-9]+ ]]; then

|

||||

echo "is_prerelease=true" >> $GITHUB_OUTPUT

|

||||

else

|

||||

echo "is_prerelease=false" >> $GITHUB_OUTPUT

|

||||

fi

|

||||

|

||||

test:

|

||||

runs-on: ubuntu-latest

|

||||

timeout-minutes: 15

|

||||

needs: validate

|

||||

|

||||

strategy:

|

||||

fail-fast: false

|

||||

matrix:

|

||||

python-version: ["3.10", "3.11", "3.12", "3.13", "3.14"]

|

||||

|

||||

steps:

|

||||

- name: Checkout code

|

||||

uses: actions/checkout@v5

|

||||

|

||||

- name: Set up Python ${{ matrix.python-version }}

|

||||

uses: actions/setup-python@v6

|

||||

with:

|

||||

python-version: ${{ matrix.python-version }}

|

||||

cache: 'pip'

|

||||

|

||||

- name: Install dependencies

|

||||

run: |

|

||||

python -m pip install --upgrade pip

|

||||

python -m pip install ".[cpu,dev]"

|

||||

|

||||

- name: Run tests

|

||||

run: pytest -v --tb=short

|

||||

|

||||

release:

|

||||

runs-on: ubuntu-latest

|

||||

timeout-minutes: 5

|

||||

needs: test

|

||||

permissions:

|

||||

contents: write

|

||||

|

||||

steps:

|

||||

- name: Checkout code

|

||||

uses: actions/checkout@v5

|

||||

with:

|

||||

fetch-depth: 0

|

||||

token: ${{ secrets.RELEASE_TOKEN }}

|

||||

|

||||

- name: Set up Python

|

||||

uses: actions/setup-python@v6

|

||||

with:

|

||||

python-version: "3.11"

|

||||

|

||||

- name: Update pyproject.toml

|

||||

run: |

|

||||

python - <<'EOF'

|

||||

import re, pathlib

|

||||

p = pathlib.Path('pyproject.toml')

|

||||

text = p.read_text()

|

||||

new = re.sub(r'^version\s*=\s*".*"', f'version = "${{ inputs.version }}"', text, count=1, flags=re.M)

|

||||

if new == text:

|

||||

raise SystemExit("Failed to update version in pyproject.toml")

|

||||

p.write_text(new)

|

||||

EOF

|

||||

|

||||

- name: Update uniface/__init__.py

|

||||

run: |

|

||||

python - <<'EOF'

|

||||

import re, pathlib

|

||||

p = pathlib.Path('uniface/__init__.py')

|

||||

text = p.read_text()

|

||||

new = re.sub(r"^__version__\s*=\s*'.*'", f"__version__ = '${{ inputs.version }}'", text, count=1, flags=re.M)

|

||||

if new == text:

|

||||

raise SystemExit("Failed to update __version__ in uniface/__init__.py")

|

||||

p.write_text(new)

|

||||

EOF

|

||||

|

||||

- name: Commit, tag, push

|

||||

run: |

|

||||

git config user.name "github-actions[bot]"

|

||||

git config user.email "41898282+github-actions[bot]@users.noreply.github.com"

|

||||

git add pyproject.toml uniface/__init__.py

|

||||

git commit -m "chore: Release v${{ inputs.version }}"

|

||||

git tag "v${{ inputs.version }}"

|

||||

git push origin HEAD:${{ github.ref_name }}

|

||||

git push origin "v${{ inputs.version }}"

|

||||

|

||||

publish:

|

||||

runs-on: ubuntu-latest

|

||||

timeout-minutes: 10

|

||||

needs: [validate, release]

|

||||

permissions:

|

||||

contents: write

|

||||

id-token: write

|

||||

environment:

|

||||

name: pypi

|

||||

url: https://pypi.org/project/uniface/

|

||||

|

||||

steps:

|

||||

- name: Checkout tag

|

||||

uses: actions/checkout@v5

|

||||

with:

|

||||

ref: v${{ inputs.version }}

|

||||

|

||||

- name: Set up Python

|

||||

uses: actions/setup-python@v6

|

||||

with:

|

||||

python-version: "3.11"

|

||||

cache: 'pip'

|

||||

|

||||

- name: Install build tools

|

||||

run: |

|

||||

python -m pip install --upgrade pip

|

||||

python -m pip install build twine

|

||||

|

||||

- name: Build package

|

||||

run: python -m build

|

||||

|

||||

- name: Check package

|

||||

run: twine check dist/*

|

||||

|

||||

- name: Publish to PyPI

|

||||

env:

|

||||

TWINE_USERNAME: __token__

|

||||

TWINE_PASSWORD: ${{ secrets.PYPI_API_TOKEN }}

|

||||

run: twine upload dist/*

|

||||

|

||||

- name: Create GitHub Release

|

||||

uses: softprops/action-gh-release@v1

|

||||

with:

|

||||

tag_name: v${{ inputs.version }}

|

||||

files: dist/*

|

||||

generate_release_notes: true

|

||||

prerelease: ${{ needs.validate.outputs.is_prerelease }}

|

||||

|

||||

docs:

|

||||

runs-on: ubuntu-latest

|

||||

timeout-minutes: 10

|

||||

needs: [validate, publish]

|

||||

if: needs.validate.outputs.is_prerelease == 'false'

|

||||

permissions:

|

||||

contents: write

|

||||

|

||||

steps:

|

||||

- name: Checkout tag

|

||||

uses: actions/checkout@v5

|

||||

with:

|

||||

ref: v${{ inputs.version }}

|

||||

fetch-depth: 0

|

||||

|

||||

- name: Set up Python

|

||||

uses: actions/setup-python@v6

|

||||

with:

|

||||

python-version: "3.11"

|

||||

|

||||

- name: Install dependencies

|

||||

run: |

|

||||

python -m pip install --upgrade pip

|

||||

pip install mkdocs-material pymdown-extensions mkdocs-git-committers-plugin-2 mkdocs-git-revision-date-localized-plugin

|

||||

|

||||

- name: Build docs

|

||||

env:

|

||||

MKDOCS_GIT_COMMITTERS_APIKEY: ${{ secrets.MKDOCS_GIT_COMMITTERS_APIKEY }}

|

||||

run: mkdocs build --strict

|

||||

|

||||

- name: Deploy to GitHub Pages

|

||||

uses: peaceiris/actions-gh-pages@v4

|

||||

with:

|

||||

github_token: ${{ secrets.GITHUB_TOKEN }}

|

||||

publish_dir: ./site

|

||||

destination_dir: docs

|

||||

119

.github/workflows/publish.yml

vendored

@@ -1,119 +0,0 @@

|

||||

name: Publish to PyPI

|

||||

|

||||

on:

|

||||

push:

|

||||

tags:

|

||||

- "v*.*.*" # Trigger only on version tags like v0.1.9

|

||||

|

||||

concurrency:

|

||||

group: ${{ github.workflow }}-${{ github.ref }}

|

||||

cancel-in-progress: true

|

||||

|

||||

jobs:

|

||||

validate:

|

||||

runs-on: ubuntu-latest

|

||||

timeout-minutes: 5

|

||||

outputs:

|

||||

version: ${{ steps.get_version.outputs.version }}

|

||||

tag_version: ${{ steps.get_version.outputs.tag_version }}

|

||||

|

||||

steps:

|

||||

- name: Checkout code

|

||||

uses: actions/checkout@v4

|

||||

|

||||

- name: Set up Python

|

||||

uses: actions/setup-python@v5

|

||||

with:

|

||||

python-version: "3.11" # Needs 3.11+ for tomllib

|

||||

|

||||

- name: Get version from tag and pyproject.toml

|

||||

id: get_version

|

||||

run: |

|

||||

TAG_VERSION=${GITHUB_REF#refs/tags/v}

|

||||

echo "tag_version=$TAG_VERSION" >> $GITHUB_OUTPUT

|

||||

|

||||

PYPROJECT_VERSION=$(python -c "import tomllib; print(tomllib.load(open('pyproject.toml','rb'))['project']['version'])")

|

||||

echo "version=$PYPROJECT_VERSION" >> $GITHUB_OUTPUT

|

||||

|

||||

echo "Tag version: v$TAG_VERSION"

|

||||

echo "pyproject.toml version: $PYPROJECT_VERSION"

|

||||

|

||||

- name: Verify version match

|

||||

run: |

|

||||

if [ "${{ steps.get_version.outputs.tag_version }}" != "${{ steps.get_version.outputs.version }}" ]; then

|

||||

echo "Error: Tag version (${{ steps.get_version.outputs.tag_version }}) does not match pyproject.toml version (${{ steps.get_version.outputs.version }})"

|

||||

exit 1

|

||||

fi

|

||||

echo "Version validation passed: ${{ steps.get_version.outputs.version }}"

|

||||

|

||||

test:

|

||||

runs-on: ubuntu-latest

|

||||

timeout-minutes: 15

|

||||

needs: validate

|

||||

|

||||

strategy:

|

||||

fail-fast: false

|

||||

matrix:

|

||||

python-version: ["3.10", "3.13"]

|

||||

|

||||

steps:

|

||||

- name: Checkout code

|

||||

uses: actions/checkout@v4

|

||||

|

||||

- name: Set up Python ${{ matrix.python-version }}

|

||||

uses: actions/setup-python@v5

|

||||

with:

|

||||

python-version: ${{ matrix.python-version }}

|

||||

cache: 'pip'

|

||||

|

||||

- name: Install dependencies

|

||||

run: |

|

||||

python -m pip install --upgrade pip

|

||||

python -m pip install .[dev]

|

||||

|

||||

- name: Run tests

|

||||

run: pytest -v

|

||||

|

||||

publish:

|

||||

runs-on: ubuntu-latest

|

||||

timeout-minutes: 10

|

||||

needs: [validate, test]

|

||||

permissions:

|

||||

contents: write

|

||||

id-token: write

|

||||

environment:

|

||||

name: pypi

|

||||

url: https://pypi.org/project/uniface/

|

||||

|

||||

steps:

|

||||

- name: Checkout code

|

||||

uses: actions/checkout@v4

|

||||

|

||||

- name: Set up Python

|

||||

uses: actions/setup-python@v5

|

||||

with:

|

||||

python-version: "3.10"

|

||||

cache: 'pip'

|

||||

|

||||

- name: Install build tools

|

||||

run: |

|

||||

python -m pip install --upgrade pip

|

||||

python -m pip install build twine

|

||||

|

||||

- name: Build package

|

||||

run: python -m build

|

||||

|

||||

- name: Check package

|

||||

run: twine check dist/*

|

||||

|

||||

- name: Publish to PyPI

|

||||

env:

|

||||

TWINE_USERNAME: __token__

|

||||

TWINE_PASSWORD: ${{ secrets.PYPI_API_TOKEN }}

|

||||

run: twine upload dist/*

|

||||

|

||||

- name: Create GitHub Release

|

||||

uses: softprops/action-gh-release@v1

|

||||

with:

|

||||

files: dist/*

|

||||

generate_release_notes: true

|

||||

@@ -18,6 +18,13 @@ repos:

|

||||

- id: debug-statements

|

||||

- id: check-ast

|

||||

|

||||

# Strip Jupyter notebook outputs

|

||||

- repo: https://github.com/kynan/nbstripout

|

||||

rev: 0.9.1

|

||||

hooks:

|

||||

- id: nbstripout

|

||||

files: ^examples/

|

||||

|

||||

# Ruff - Fast Python linter and formatter

|

||||

- repo: https://github.com/astral-sh/ruff-pre-commit

|

||||

rev: v0.14.10

|

||||

|

||||

6

AGENTS.md

Normal file

@@ -0,0 +1,6 @@

<!-- Cursor agent instructions — shared with CLAUDE.md -->
<!-- See CLAUDE.md for full project instructions for AI coding agents. -->

# AGENTS.md

Please read and follow all instructions in [CLAUDE.md](./CLAUDE.md).

81

CLAUDE.md

Normal file

@@ -0,0 +1,81 @@

# CLAUDE.md

Project instructions for AI coding agents.

## Project Overview

UniFace is a Python library for face detection, recognition, tracking, landmark analysis, face parsing, gaze estimation, age/gender detection. It uses ONNX Runtime for inference.

## Code Style

- Python 3.10+ with type hints
- Line length: 120
- Single quotes for strings, double quotes for docstrings
- Google-style docstrings
- Formatter/linter: Ruff (config in `pyproject.toml`)
- Run `ruff format .` and `ruff check . --fix` before committing

## Commit Messages

Follow [Conventional Commits](https://www.conventionalcommits.org/) with a **capitalized** description:

```
<type>: <Capitalized short description>
```

Types: `feat`, `fix`, `docs`, `style`, `refactor`, `perf`, `test`, `build`, `ci`, `chore`

Examples:
- `feat: Add gaze estimation model`
- `fix: Correct bounding box scaling for non-square images`
- `ci: Add nbstripout pre-commit hook`
- `docs: Update installation instructions`
- `refactor: Unify attribute/detector base classes`
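
The commit-message convention above can be checked mechanically. The following is a minimal sketch and is not part of the repository: the helper name `is_valid_commit_subject` is hypothetical, and it assumes that "capitalized" means the description starts with an ASCII uppercase letter.

```python
import re

# Types allowed by the convention described above.
COMMIT_TYPES = ('feat', 'fix', 'docs', 'style', 'refactor', 'perf', 'test', 'build', 'ci', 'chore')

# Pattern for "<type>: <Capitalized short description>".
_TYPES_ALTERNATION = '|'.join(COMMIT_TYPES)
_SUBJECT_RE = re.compile(rf'({_TYPES_ALTERNATION}): [A-Z].*')


def is_valid_commit_subject(subject: str) -> bool:
    """Return True if a commit subject line matches the documented format.

    Hypothetical helper for illustration; the project may enforce this differently.
    """
    return _SUBJECT_RE.fullmatch(subject) is not None
```

A check like this could run in a `commit-msg` hook, although the diff does not show the project doing so.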

## Testing

```bash
pytest -v --tb=short
```

Tests live in `tests/`. Run the full suite before submitting changes.

## Pre-commit

Pre-commit hooks handle formatting, linting, security checks, and notebook output stripping. Always run:

```bash
pre-commit install
pre-commit run --all-files
```

## Project Structure

```
uniface/                # Main package
  detection/            # Face detection models (SCRFD, RetinaFace, YOLOv5, YOLOv8)
  recognition/          # Face recognition/verification (AdaFace, ArcFace, EdgeFace, MobileFace, SphereFace)
  landmark/             # Facial landmark models
  tracking/             # Object tracking (ByteTrack)
  parsing/              # Face parsing/segmentation (BiSeNet, XSeg)
  gaze/                 # Gaze estimation
  headpose/             # Head pose estimation
  attribute/            # Age, gender, emotion detection
  spoofing/             # Anti-spoofing (MiniFASNet)
  privacy/              # Face anonymization
  stores/               # Vector stores (FAISS)
  constants.py          # Model weight URLs and checksums
  model_store.py        # Model download/cache management
  analyzer.py           # High-level FaceAnalyzer API
  types.py              # Shared type definitions
tests/                  # Unit tests
examples/               # Jupyter notebooks (outputs are auto-stripped)
docs/                   # MkDocs documentation
```

## Key Conventions

- New models: add class in submodule, register weights in `constants.py`, export in `__init__.py`
- Dependencies: managed in `pyproject.toml`
- All ONNX models are downloaded on demand with SHA256 verification
- Do not commit notebook outputs; `nbstripout` pre-commit hook handles this
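
The SHA256 verification mentioned in the conventions above can be illustrated with the standard library. This is a sketch under stated assumptions, not the code in `model_store.py`: the function names `sha256_of_file` and `verify_checksum` are hypothetical, and the expected digest is assumed to be stored as a hex string.

```python
import hashlib
from pathlib import Path


def sha256_of_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large model weights are not loaded into memory at once."""
    digest = hashlib.sha256()
    with path.open('rb') as f:
        # iter() with a sentinel keeps reading until read() returns empty bytes.
        for chunk in iter(lambda: f.read(chunk_size), b''):
            digest.update(chunk)
    return digest.hexdigest()


def verify_checksum(path: Path, expected_sha256: str) -> bool:
    """Compare the file's digest with the expected hex digest (case-insensitive)."""
    return sha256_of_file(path) == expected_sha256.lower()
```

A downloader would typically call `verify_checksum` after fetching a weight file and discard the file on a mismatch.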

|

||||

@@ -184,6 +184,48 @@ Example notebooks demonstrating library usage:

| Face Parsing | [06_face_parsing.ipynb](examples/06_face_parsing.ipynb) |
| Face Anonymization | [07_face_anonymization.ipynb](examples/07_face_anonymization.ipynb) |
| Gaze Estimation | [08_gaze_estimation.ipynb](examples/08_gaze_estimation.ipynb) |
| Face Segmentation | [09_face_segmentation.ipynb](examples/09_face_segmentation.ipynb) |
| Face Vector Store | [10_face_vector_store.ipynb](examples/10_face_vector_store.ipynb) |
| Head Pose Estimation | [11_head_pose_estimation.ipynb](examples/11_head_pose_estimation.ipynb) |

## Release Process

Releases are fully automated via GitHub Actions. Only maintainers with branch-protection bypass privileges on `main` can trigger a release.

### Cutting a release

1. Go to **Actions → Release Pipeline → Run workflow** on GitHub.
2. Enter the version following [PEP 440](https://peps.python.org/pep-0440/):
   - Stable: `0.7.0`, `1.0.0`
   - Pre-release: `0.7.0rc1`, `0.7.0b1`, `0.7.0a1`, `0.7.0.dev1`
3. Click **Run workflow**.

### What happens automatically

The `Release Pipeline` workflow runs all stages in sequence:

1. **Validate** — checks the version string against PEP 440 and confirms the tag does not already exist.
2. **Test** — runs the test suite on Python 3.10–3.14.
3. **Release** — updates `pyproject.toml` and `uniface/__init__.py`, commits `chore: Release vX.Y.Z` to `main`, creates and pushes tag `vX.Y.Z`.
4. **Publish** — builds the package, uploads to PyPI, and creates a GitHub Release (flagged as pre-release for `a`/`b`/`rc`/`.dev` versions).
5. **Deploy docs** — runs only for **stable** versions. Pre-releases do not update the live documentation site.
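
The Validate stage and the stable/pre-release split can be tried locally before triggering the workflow. The sketch below reuses the two regular expressions that appear in `pipeline.yml` in this comparison; the function name `classify_version` is hypothetical. Note that the pattern accepts only a subset of PEP 440 (three numeric components plus an optional `a`/`b`/`rc`/`.dev` suffix), so forms such as `1.0` or post-releases are rejected.

```python
import re

# Same pattern as the "Validate version (PEP 440)" step in pipeline.yml.
VERSION_RE = re.compile(r'\d+\.\d+\.\d+((a|b|rc)\d+|\.dev\d+)?')

# Same pattern as the "Detect pre-release" step (a bash regex in the workflow).
PRERELEASE_RE = re.compile(r'(a|b|rc|\.dev)[0-9]+')


def classify_version(version: str) -> str:
    """Return 'stable' or 'pre-release', raising ValueError for rejected strings.

    Hypothetical helper mirroring the workflow logic for local experimentation.
    """
    if VERSION_RE.fullmatch(version) is None:
        raise ValueError(f'Invalid version: {version}')
    return 'pre-release' if PRERELEASE_RE.search(version) else 'stable'


if __name__ == '__main__':
    for candidate in ('3.6.0', '3.6.0b1', '3.6.0rc1', '3.6.0.dev1'):
        print(candidate, '->', classify_version(candidate))
```

Only versions classified as stable would reach the docs deployment stage described above.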

### Verifying a release

- PyPI: <https://pypi.org/project/uniface/>
- GitHub Releases: <https://github.com/yakhyo/uniface/releases>
- Docs (stable only): <https://yakhyo.github.io/uniface/>

### Installing a pre-release

End users can opt in to pre-releases with the `--pre` flag:

```bash
pip install uniface --pre          # latest pre-release
pip install uniface==0.7.0rc1      # specific pre-release
```

Without `--pre`, `pip install uniface` always resolves to the latest stable version.

## Questions?

166

README.md

@@ -1,4 +1,4 @@

|

||||

<h1 align="center">UniFace: All-in-One Face Analysis Library</h1>

|

||||

<h1 align="center">UniFace: A Unified Face Analysis Library for Python</h1>

|

||||

|

||||

<div align="center">

|

||||

|

||||

@@ -14,53 +14,90 @@

|

||||

</div>

|

||||

|

||||

<div align="center">

|

||||

<img src="https://raw.githubusercontent.com/yakhyo/uniface/main/.github/logos/uniface_rounded_q80.webp" width="90%" alt="UniFace - All-in-One Open-Source Face Analysis Library">

|

||||

<img src="https://raw.githubusercontent.com/yakhyo/uniface/main/.github/logos/uniface_rounded_q80.webp" width="90%" alt="UniFace - A Unified Face Analysis Library for Python">

|

||||

</div>

|

||||

|

||||

---

|

||||

|

||||

**UniFace** is a lightweight, production-ready face analysis library built on ONNX Runtime. It provides high-performance face detection, recognition, landmark detection, face parsing, gaze estimation, and attribute analysis with hardware acceleration support across platforms.

|

||||

**UniFace** is a lightweight, production-ready Python library for face detection, recognition, tracking, landmark analysis, face parsing, gaze estimation, and face attributes.

|

||||

|

||||

---

|

||||

|

||||

## Features

|

||||

|

||||

- **Face Detection** — RetinaFace, SCRFD, YOLOv5-Face, and YOLOv8-Face with 5-point landmarks

|

||||

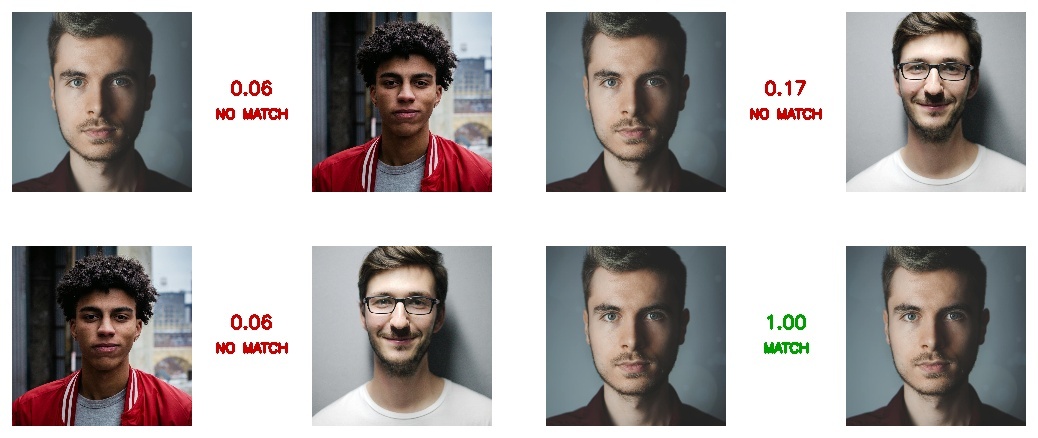

- **Face Recognition** — ArcFace, MobileFace, and SphereFace embeddings

|

||||

- **Face Recognition** — AdaFace, ArcFace, EdgeFace, MobileFace, and SphereFace embeddings

|

||||

- **Face Tracking** — Multi-object tracking with [BYTETracker](https://github.com/yakhyo/bytetrack-tracker) for persistent IDs across video frames

|

||||

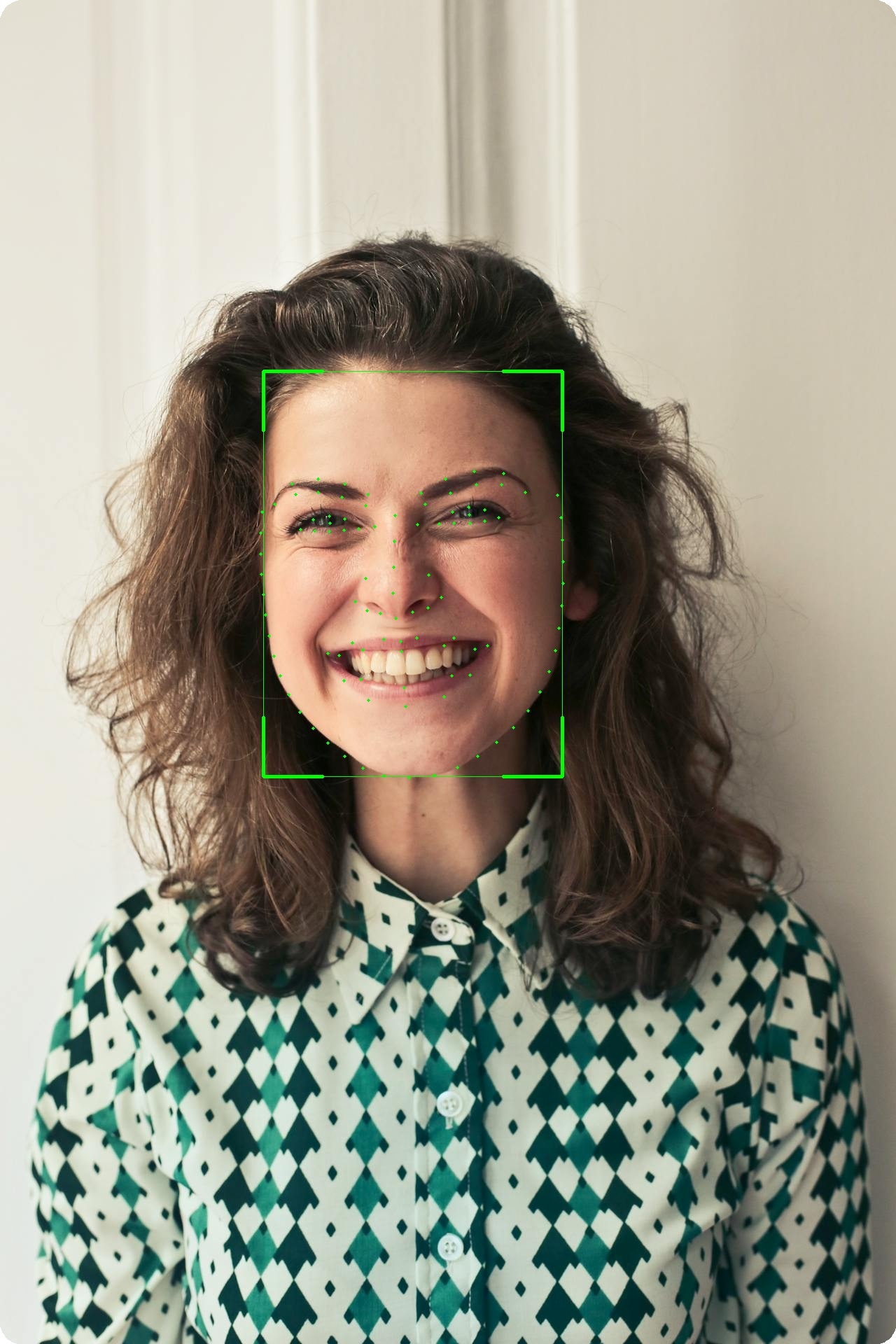

- **Facial Landmarks** — 106-point landmark localization module (separate from 5-point detector landmarks)

|

||||

- **Face Parsing** — BiSeNet semantic segmentation (19 classes), XSeg face masking

|

||||

- **Portrait Matting** — Trimap-free alpha matte with MODNet (background removal, green screen, compositing)

|

||||

- **Gaze Estimation** — Real-time gaze direction with MobileGaze

|

||||

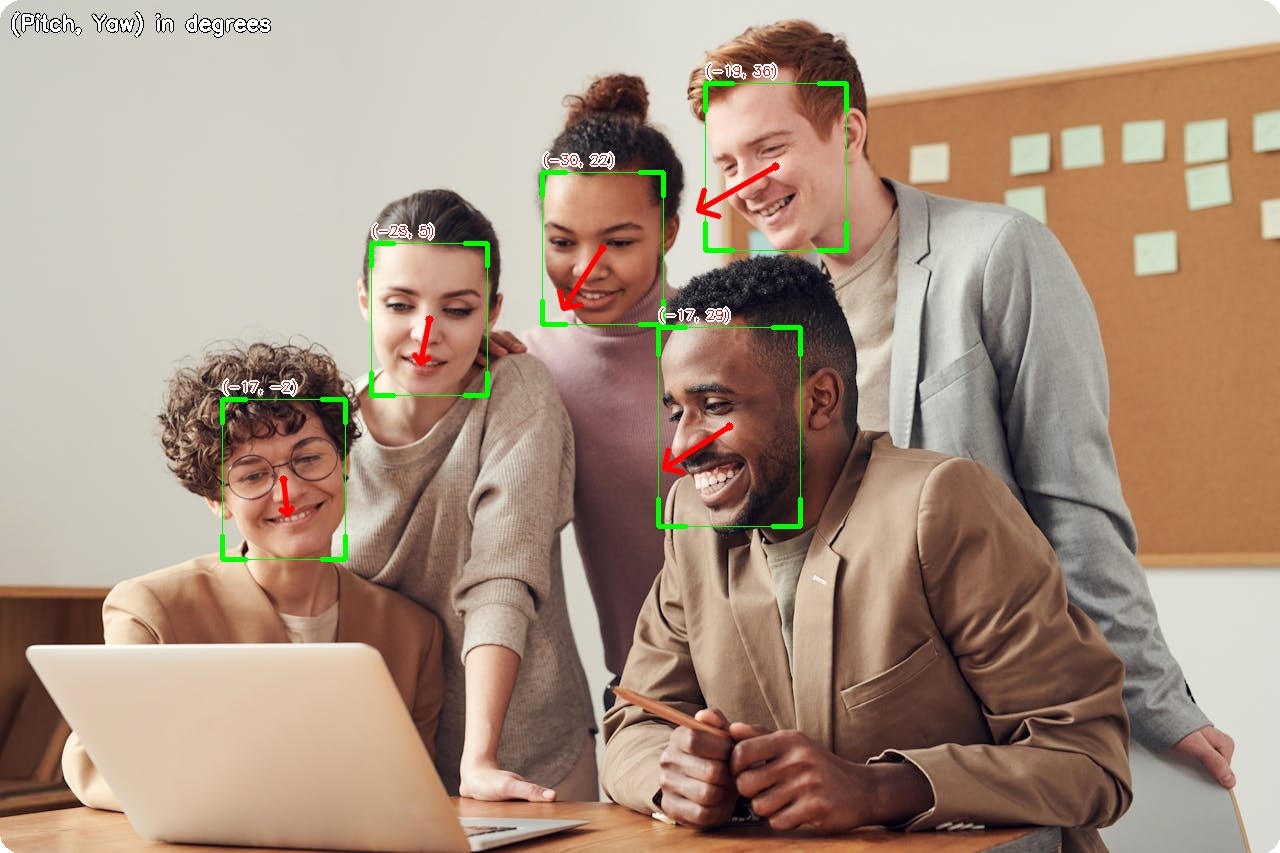

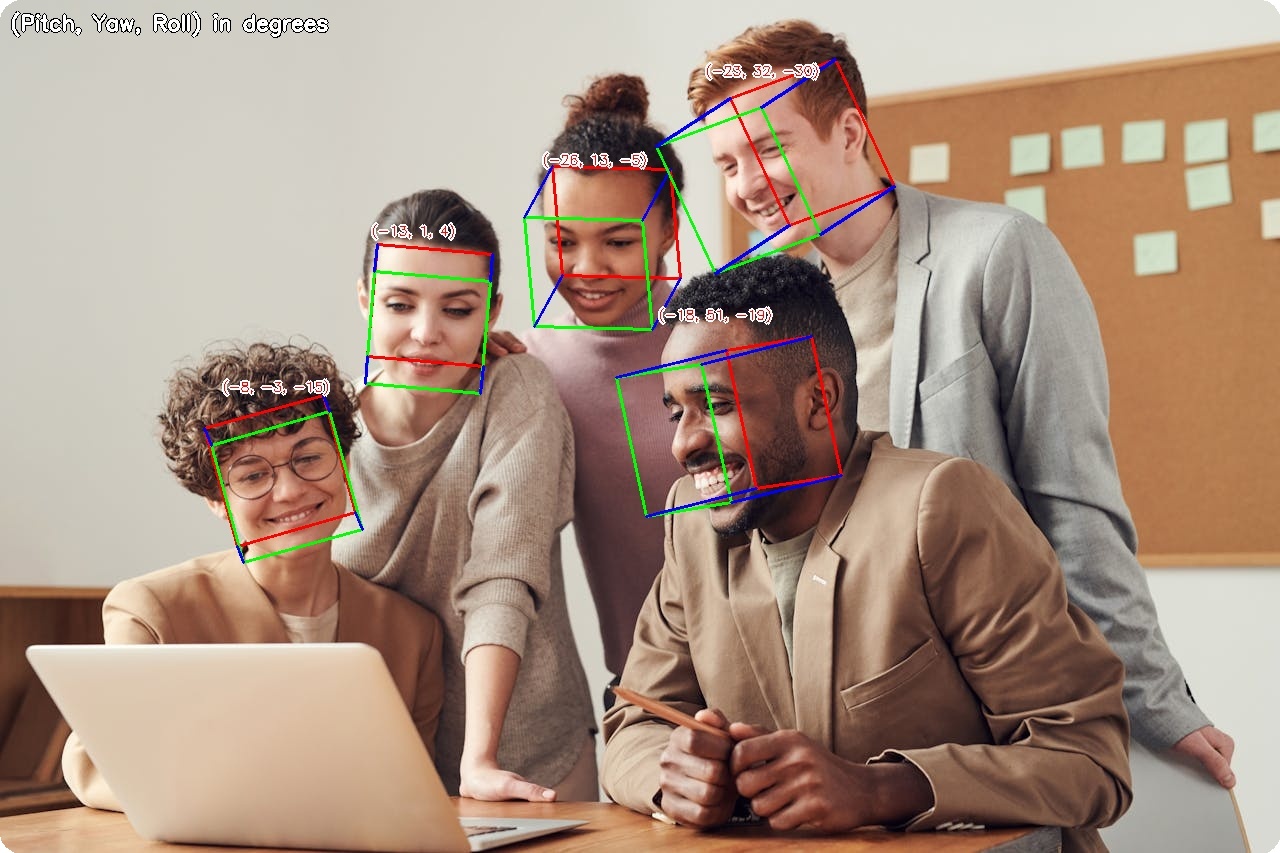

- **Head Pose Estimation** — 3D head orientation (pitch, yaw, roll) with 6D rotation representation

|

||||

- **Attribute Analysis** — Age, gender, race (FairFace), and emotion

|

||||

- **Vector Indexing** — FAISS-backed embedding store for fast multi-identity search

|

||||

- **Vector Store** — FAISS-backed embedding store for fast multi-identity search

|

||||

- **Anti-Spoofing** — Face liveness detection with MiniFASNet

|

||||

- **Face Anonymization** — 5 blur methods for privacy protection

|

||||

- **Hardware Acceleration** — ARM64 (Apple Silicon), CUDA (NVIDIA), CPU

|

||||

|

||||

---

|

||||

|

||||

## Visual Examples

|

||||

|

||||

<table>

|

||||

<tr>

|

||||

<td align="center"><b>Face Detection</b><br><img src="https://raw.githubusercontent.com/yakhyo/uniface/main/assets/demos/detection.jpg" width="100%"></td>

|

||||

<td align="center"><b>Gaze Estimation</b><br><img src="https://raw.githubusercontent.com/yakhyo/uniface/main/assets/demos/gaze.jpg" width="100%"></td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td align="center"><b>Head Pose Estimation</b><br><img src="https://raw.githubusercontent.com/yakhyo/uniface/main/assets/demos/headpose.jpg" width="100%"></td>

|

||||

<td align="center"><b>Age & Gender</b><br><img src="https://raw.githubusercontent.com/yakhyo/uniface/main/assets/demos/age_gender.jpg" width="100%"></td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td align="center" colspan="2"><b>Face Verification</b><br><img src="https://raw.githubusercontent.com/yakhyo/uniface/main/assets/demos/verification.jpg" width="80%"></td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td align="center" colspan="2"><b>106-Point Landmarks</b><br><img src="https://raw.githubusercontent.com/yakhyo/uniface/main/assets/demos/landmarks.jpg" width="36%"></td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td align="center" colspan="2"><b>Face Parsing</b><br><img src="https://raw.githubusercontent.com/yakhyo/uniface/main/assets/demos/parsing.jpg" width="80%"></td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td align="center" colspan="2"><b>Face Segmentation</b><br><img src="https://raw.githubusercontent.com/yakhyo/uniface/main/assets/demos/segmentation.jpg" width="80%"></td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td align="center" colspan="2"><b>Portrait Matting</b><br><img src="https://raw.githubusercontent.com/yakhyo/uniface/main/assets/demos/matting.jpg" width="100%"></td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td align="center" colspan="2"><b>Face Anonymization</b><br><img src="https://raw.githubusercontent.com/yakhyo/uniface/main/assets/demos/anonymization.jpg" width="100%"></td>

|

||||

</tr>

|

||||

</table>

|

||||

|

||||

---

|

||||

|

||||

## Installation

|

||||

|

||||

**Standard installation**

|

||||

**CPU / Apple Silicon**

|

||||

|

||||

```bash

|

||||

pip install uniface

|

||||

pip install uniface[cpu]

|

||||

```

|

||||

|

||||

**GPU support (CUDA)**

|

||||

**GPU support (NVIDIA CUDA)**

|

||||

|

||||

```bash

|

||||

pip install uniface[gpu]

|

||||

```

|

||||

|

||||

> **Why separate extras?** `onnxruntime` and `onnxruntime-gpu` conflict when both are installed — they own the same Python namespace. Installing only the extra you need prevents that conflict entirely.

|

||||

|

||||
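
To see whether an environment already has the conflict described in the note on separate extras, the installed distributions can be inspected with the standard library. This is a minimal sketch, not something the README provides: the helper names are hypothetical, and it only looks at the two distribution names mentioned in that note.

```python
from importlib import metadata

# Distribution names taken from the note on separate extras.
ORT_DISTRIBUTIONS = ('onnxruntime', 'onnxruntime-gpu')


def installed_onnxruntime_distributions() -> list[str]:
    """Return which of the ONNX Runtime distributions are installed (hypothetical helper)."""
    found = []
    for name in ORT_DISTRIBUTIONS:
        try:
            metadata.version(name)
        except metadata.PackageNotFoundError:
            continue
        found.append(name)
    return found


def has_conflict(found: list[str]) -> bool:
    """Both distributions provide the same import package, so having both is a conflict."""
    return len(found) > 1


if __name__ == '__main__':
    found = installed_onnxruntime_distributions()
    print('Installed:', found or 'none')
    if has_conflict(found):
        print('Both CPU and GPU builds are installed; keep only one of them.')
```
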

**From source (latest version)**

|

||||

|

||||

```bash

|

||||

git clone https://github.com/yakhyo/uniface.git

|

||||

cd uniface && pip install -e .

|

||||

cd uniface && pip install -e ".[cpu]" # or .[gpu] for CUDA

|

||||

```

|

||||

|

||||

**FAISS vector indexing**

|

||||

**FAISS vector store**

|

||||

|

||||

```bash

|

||||

pip install faiss-cpu # or faiss-gpu for CUDA

|

||||

@@ -126,14 +163,10 @@ for face in faces:

|

||||

|

||||

```python

|

||||

import cv2

|

||||

from uniface.analyzer import FaceAnalyzer

|

||||

from uniface.detection import RetinaFace

|

||||

from uniface.recognition import ArcFace

|

||||

from uniface import FaceAnalyzer

|

||||

|

||||

detector = RetinaFace()

|

||||

recognizer = ArcFace()

|

||||

|

||||

analyzer = FaceAnalyzer(detector, recognizer=recognizer)

|

||||

# Zero-config: uses SCRFD (500M) + ArcFace (MobileNet) by default

|

||||

analyzer = FaceAnalyzer()

|

||||

|

||||

image = cv2.imread("photo.jpg")

|

||||

if image is None:

|

||||

@@ -145,19 +178,63 @@ for face in faces:

|

||||

print(face.bbox, face.embedding.shape if face.embedding is not None else None)

|

||||

```

|

||||

|

||||

---

|

||||

|

||||

## Execution Providers (ONNX Runtime)

|

||||

With attributes:

|

||||

|

||||

```python

|

||||

from uniface.detection import RetinaFace

|

||||

from uniface import FaceAnalyzer, AgeGender

|

||||

|

||||

# Force CPU-only inference

|

||||

detector = RetinaFace(providers=["CPUExecutionProvider"])

|

||||

analyzer = FaceAnalyzer(attributes=[AgeGender()])

|

||||

faces = analyzer.analyze(image)

|

||||

|

||||

for face in faces:

|

||||

print(f"{face.sex}, {face.age}y, embedding={face.embedding.shape}")

|

||||

```

|

||||

|

||||

See more in the docs:

|

||||

https://yakhyo.github.io/uniface/concepts/execution-providers/

|

||||

---

|

||||

|

||||

## Example (Portrait Matting)

|

||||

|

||||

```python

|

||||

import cv2

|

||||

import numpy as np

|

||||

from uniface.matting import MODNet

|

||||

|

||||

matting = MODNet()

|

||||

|

||||

image = cv2.imread("portrait.jpg")

|

||||

matte = matting.predict(image) # (H, W) float32 in [0, 1]

|

||||

|

||||

# Transparent PNG

|

||||

rgba = cv2.cvtColor(image, cv2.COLOR_BGR2BGRA)

|

||||

rgba[:, :, 3] = (matte * 255).astype(np.uint8)

|

||||

cv2.imwrite("transparent.png", rgba)

|

||||

|

||||

# Green screen

|

||||

matte_3ch = matte[:, :, np.newaxis]

|

||||

bg = np.full_like(image, (0, 177, 64), dtype=np.uint8)

|

||||

result = (image * matte_3ch + bg * (1 - matte_3ch)).astype(np.uint8)

|

||||

cv2.imwrite("green_screen.jpg", result)

|

||||

```

|

||||

|

||||

---

|

||||

|

||||

## Jupyter Notebooks

|

||||

|

||||

| Example | Colab | Description |

|

||||

|---------|:-----:|-------------|

|

||||

| [01_face_detection.ipynb](examples/01_face_detection.ipynb) | [](https://colab.research.google.com/github/yakhyo/uniface/blob/main/examples/01_face_detection.ipynb) | Face detection and landmarks |

|

||||

| [02_face_alignment.ipynb](examples/02_face_alignment.ipynb) | [](https://colab.research.google.com/github/yakhyo/uniface/blob/main/examples/02_face_alignment.ipynb) | Face alignment for recognition |

|

||||

| [03_face_verification.ipynb](examples/03_face_verification.ipynb) | [](https://colab.research.google.com/github/yakhyo/uniface/blob/main/examples/03_face_verification.ipynb) | Compare faces for identity |

|

||||

| [04_face_search.ipynb](examples/04_face_search.ipynb) | [](https://colab.research.google.com/github/yakhyo/uniface/blob/main/examples/04_face_search.ipynb) | Find a person in group photos |

|

||||

| [05_face_analyzer.ipynb](examples/05_face_analyzer.ipynb) | [](https://colab.research.google.com/github/yakhyo/uniface/blob/main/examples/05_face_analyzer.ipynb) | Unified face analysis |

|

||||

| [06_face_parsing.ipynb](examples/06_face_parsing.ipynb) | [](https://colab.research.google.com/github/yakhyo/uniface/blob/main/examples/06_face_parsing.ipynb) | Semantic face segmentation |

|

||||

| [07_face_anonymization.ipynb](examples/07_face_anonymization.ipynb) | [](https://colab.research.google.com/github/yakhyo/uniface/blob/main/examples/07_face_anonymization.ipynb) | Privacy-preserving blur |

|

||||

| [08_gaze_estimation.ipynb](examples/08_gaze_estimation.ipynb) | [](https://colab.research.google.com/github/yakhyo/uniface/blob/main/examples/08_gaze_estimation.ipynb) | Gaze direction estimation |

|

||||

| [09_face_segmentation.ipynb](examples/09_face_segmentation.ipynb) | [](https://colab.research.google.com/github/yakhyo/uniface/blob/main/examples/09_face_segmentation.ipynb) | Face segmentation with XSeg |

|

||||

| [10_face_vector_store.ipynb](examples/10_face_vector_store.ipynb) | [](https://colab.research.google.com/github/yakhyo/uniface/blob/main/examples/10_face_vector_store.ipynb) | FAISS-backed face database |

|

||||

| [11_head_pose_estimation.ipynb](examples/11_head_pose_estimation.ipynb) | [](https://colab.research.google.com/github/yakhyo/uniface/blob/main/examples/11_head_pose_estimation.ipynb) | Head pose estimation (pitch, yaw, roll) |

|

||||

| [12_face_recognition.ipynb](examples/12_face_recognition.ipynb) | [](https://colab.research.google.com/github/yakhyo/uniface/blob/main/examples/12_face_recognition.ipynb) | Standalone face recognition pipeline |

|

||||

| [13_portrait_matting.ipynb](examples/13_portrait_matting.ipynb) | [](https://colab.research.google.com/github/yakhyo/uniface/blob/main/examples/13_portrait_matting.ipynb) | Portrait matting with MODNet |

|

||||

|

||||

---

|

||||

|

||||

@@ -176,6 +253,20 @@ Full documentation: https://yakhyo.github.io/uniface/

|

||||

|

||||

---

|

||||

|

||||

## Execution Providers (ONNX Runtime)

|

||||

|

||||

```python

|

||||

from uniface.detection import RetinaFace

|

||||

|

||||

# Force CPU-only inference

|

||||

detector = RetinaFace(providers=["CPUExecutionProvider"])

|

||||

```

|

||||

|

||||

See more in the docs:

|

||||

https://yakhyo.github.io/uniface/concepts/execution-providers/
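
The provider list passed above can also be built at runtime. The following is a minimal sketch, not part of UniFace: `pick_providers` is a hypothetical helper, and the preference order is an assumption. It relies only on `onnxruntime.get_available_providers()` and falls back to CPU when ONNX Runtime is not importable.

```python
def pick_providers(available, preferred=("CUDAExecutionProvider", "CoreMLExecutionProvider")):
    """Return the preferred providers that are available, always ending with CPU.

    Hypothetical helper for illustration; the preference order is an assumption.
    """
    chosen = [p for p in preferred if p in available]
    chosen.append("CPUExecutionProvider")  # CPU execution is always the fallback
    return chosen


try:
    import onnxruntime as ort

    available = ort.get_available_providers()
except ImportError:
    # ONNX Runtime is not installed; assume CPU only for this sketch
    available = ["CPUExecutionProvider"]

providers = pick_providers(available)
print(providers)
```

The resulting list can then be passed as `providers=` to an ONNX-based model such as `RetinaFace`.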

|

||||

|

||||

---

|

||||

|

||||

## Datasets

|

||||

|

||||

| Task | Training Dataset | Models |

|

||||

@@ -184,7 +275,9 @@ Full documentation: https://yakhyo.github.io/uniface/

|

||||

| Recognition | MS1MV2 | MobileFace, SphereFace |

|

||||

| Recognition | WebFace600K | ArcFace |

|

||||

| Recognition | WebFace4M / 12M | AdaFace |

|

||||

| Recognition | MS1MV2 | EdgeFace |

|

||||

| Gaze | Gaze360 | MobileGaze |

|

||||

| Head Pose | 300W-LP | HeadPose (ResNet, MobileNet) |

|

||||

| Parsing | CelebAMask-HQ | BiSeNet |

|

||||

| Attributes | CelebA, FairFace, AffectNet | AgeGender, FairFace, Emotion |

|

||||

|

||||

@@ -192,23 +285,6 @@ Full documentation: https://yakhyo.github.io/uniface/

|

||||

|

||||

---

|

||||

|

||||

## Jupyter Notebooks

|

||||

|

||||

| Example | Colab | Description |

|

||||

|---------|:-----:|-------------|

|

||||

| [01_face_detection.ipynb](examples/01_face_detection.ipynb) | [](https://colab.research.google.com/github/yakhyo/uniface/blob/main/examples/01_face_detection.ipynb) | Face detection and landmarks |

|

||||

| [02_face_alignment.ipynb](examples/02_face_alignment.ipynb) | [](https://colab.research.google.com/github/yakhyo/uniface/blob/main/examples/02_face_alignment.ipynb) | Face alignment for recognition |

|

||||

| [03_face_verification.ipynb](examples/03_face_verification.ipynb) | [](https://colab.research.google.com/github/yakhyo/uniface/blob/main/examples/03_face_verification.ipynb) | Compare faces for identity |

|

||||

| [04_face_search.ipynb](examples/04_face_search.ipynb) | [](https://colab.research.google.com/github/yakhyo/uniface/blob/main/examples/04_face_search.ipynb) | Find a person in group photos |

|

||||

| [05_face_analyzer.ipynb](examples/05_face_analyzer.ipynb) | [](https://colab.research.google.com/github/yakhyo/uniface/blob/main/examples/05_face_analyzer.ipynb) | All-in-one analysis |

|

||||

| [06_face_parsing.ipynb](examples/06_face_parsing.ipynb) | [](https://colab.research.google.com/github/yakhyo/uniface/blob/main/examples/06_face_parsing.ipynb) | Semantic face segmentation |

|

||||

| [07_face_anonymization.ipynb](examples/07_face_anonymization.ipynb) | [](https://colab.research.google.com/github/yakhyo/uniface/blob/main/examples/07_face_anonymization.ipynb) | Privacy-preserving blur |

|

||||

| [08_gaze_estimation.ipynb](examples/08_gaze_estimation.ipynb) | [](https://colab.research.google.com/github/yakhyo/uniface/blob/main/examples/08_gaze_estimation.ipynb) | Gaze direction estimation |

|

||||

| [09_face_segmentation.ipynb](examples/09_face_segmentation.ipynb) | [](https://colab.research.google.com/github/yakhyo/uniface/blob/main/examples/09_face_segmentation.ipynb) | Face segmentation with XSeg |

|

||||

| [10_face_vector_store.ipynb](examples/10_face_vector_store.ipynb) | [](https://colab.research.google.com/github/yakhyo/uniface/blob/main/examples/10_face_vector_store.ipynb) | FAISS-backed face database |

|

||||

|

||||

---

|

||||

|

||||

## Licensing and Model Usage

|

||||

|

||||

UniFace is MIT-licensed, but several pretrained models carry their own licenses.

|

||||

@@ -231,9 +307,12 @@ If you plan commercial use, verify model license compatibility.

|

||||

| Detection | [yolov8-face-onnx-inference](https://github.com/yakhyo/yolov8-face-onnx-inference) | - | YOLOv8-Face ONNX Inference |

|

||||

| Tracking | [bytetrack-tracker](https://github.com/yakhyo/bytetrack-tracker) | - | BYTETracker Multi-Object Tracking |

|

||||

| Recognition | [face-recognition](https://github.com/yakhyo/face-recognition) | ✓ | MobileFace, SphereFace Training |

|

||||

| Recognition | [edgeface-onnx](https://github.com/yakhyo/edgeface-onnx) | - | EdgeFace ONNX Inference |

|

||||

| Parsing | [face-parsing](https://github.com/yakhyo/face-parsing) | ✓ | BiSeNet Face Parsing |

|

||||

| Parsing | [face-segmentation](https://github.com/yakhyo/face-segmentation) | - | XSeg Face Segmentation |

|

||||

| Gaze | [gaze-estimation](https://github.com/yakhyo/gaze-estimation) | ✓ | MobileGaze Training |

|

||||

| Head Pose | [head-pose-estimation](https://github.com/yakhyo/head-pose-estimation) | ✓ | Head Pose Training (6DRepNet-style) |

|

||||

| Matting | [modnet](https://github.com/yakhyo/modnet) | - | MODNet Portrait Matting |

|

||||

| Anti-Spoofing | [face-anti-spoofing](https://github.com/yakhyo/face-anti-spoofing) | - | MiniFASNet Inference |

|

||||

| Attributes | [fairface-onnx](https://github.com/yakhyo/fairface-onnx) | - | FairFace ONNX Inference |

|

||||

|

||||

@@ -257,3 +336,6 @@ Questions or feedback:

|

||||

## License

|

||||

|

||||

This project is licensed under the [MIT License](LICENSE).

|

||||

|

||||

> **Disclaimer:** This project is not affiliated with or related to

|

||||

> [Uniface](https://uniface.com/) by Rocket Software.

|

||||

|

||||

BIN assets/demos/age_gender.jpg (Normal file, Size: 206 KiB)
BIN assets/demos/anonymization.jpg (Normal file, Size: 1.5 MiB)
BIN assets/demos/detection.jpg (Normal file, Size: 341 KiB)
BIN assets/demos/gaze.jpg (Normal file, Size: 212 KiB)
BIN assets/demos/headpose.jpg (Normal file, Size: 233 KiB)
BIN assets/demos/landmarks.jpg (Normal file, Size: 428 KiB)
BIN assets/demos/matting.jpg (Normal file, Size: 938 KiB)
BIN assets/demos/parsing.jpg (Normal file, Size: 712 KiB)
BIN assets/demos/segmentation.jpg (Normal file, Size: 851 KiB)
BIN assets/demos/src_friends.jpg (Normal file, Size: 171 KiB)
BIN assets/demos/src_man1.jpg (Normal file, Size: 63 KiB)
BIN assets/demos/src_man2.jpg (Normal file, Size: 220 KiB)
BIN assets/demos/src_man3.jpg (Normal file, Size: 146 KiB)
BIN assets/demos/src_meeting.jpg (Normal file, Size: 96 KiB)
BIN assets/demos/src_portrait1.jpg (Normal file, Size: 208 KiB)
BIN assets/demos/verification.jpg (Normal file, Size: 121 KiB)
BIN assets/test_images/image5.jpg (Normal file, Size: 5.8 KiB)

@@ -39,16 +39,20 @@ recognizer = ArcFace(providers=['CPUExecutionProvider'])

|

||||

detector = RetinaFace(providers=['CUDAExecutionProvider', 'CPUExecutionProvider'])

|

||||

```

|

||||

|

||||

All model classes accept the `providers` parameter:

|

||||

All **ONNX-based** model classes accept the `providers` parameter:

|

||||

|

||||

- Detection: `RetinaFace`, `SCRFD`, `YOLOv5Face`, `YOLOv8Face`

|

||||

- Recognition: `ArcFace`, `AdaFace`, `MobileFace`, `SphereFace`

|

||||

- Landmarks: `Landmark106`

|

||||

- Gaze: `MobileGaze`

|

||||

- Parsing: `BiSeNet`

|

||||

- Parsing: `BiSeNet`, `XSeg`

|

||||

- Attributes: `AgeGender`, `FairFace`

|

||||

- Anti-Spoofing: `MiniFASNet`

|

||||

|

||||

!!! note "Non-ONNX components"

|

||||

- **Emotion** uses TorchScript and selects its device automatically (`mps` / `cuda` / `cpu`). It does **not** accept the `providers` parameter.

|

||||

- **BlurFace** is a pure OpenCV utility and does not load any model.

|

||||

|

||||

---

|

||||

|

||||

## Check Available Providers

|

||||

@@ -89,7 +93,7 @@ print("Available providers:", providers)

|

||||

No additional setup required. ARM64 optimizations are built into `onnxruntime`:

|

||||

|

||||

```bash

|

||||

pip install uniface

|

||||

pip install uniface[cpu]

|

||||

```

|

||||

|

||||

Verify ARM64:

|

||||

@@ -106,7 +110,7 @@ python -c "import platform; print(platform.machine())"

|

||||

|

||||

### NVIDIA GPU (CUDA)

|

||||

|

||||

Install with GPU support:

|

||||

Install with GPU support (this installs `onnxruntime-gpu`, which already includes CPU fallback):

|

||||

|

||||

```bash

|

||||

pip install uniface[gpu]

|

||||

@@ -136,7 +140,7 @@ else:

|

||||

CPU execution is always available:

|

||||

|

||||

```bash

|

||||

pip install uniface

|

||||

pip install uniface[cpu]

|

||||

```

|

||||

|

||||

Works on all platforms without additional configuration.

|

||||

@@ -211,7 +215,7 @@ for image_path in image_paths:

|

||||

|

||||

3. Reinstall with GPU support:

|

||||

```bash

|

||||

pip uninstall onnxruntime onnxruntime-gpu

|

||||

pip uninstall onnxruntime onnxruntime-gpu -y

|

||||

pip install uniface[gpu]

|

||||

```

|

||||

|

||||

|

||||

@@ -106,6 +106,27 @@ print(f"Yaw: {np.degrees(result.yaw):.1f}°")

|

||||

|

||||

---

|

||||

|

||||

### HeadPoseResult

|

||||

|

||||

```python
@dataclass(frozen=True)
class HeadPoseResult:
    pitch: float  # Rotation around X-axis (degrees), + = looking down
    yaw: float    # Rotation around Y-axis (degrees), + = looking right
    roll: float   # Rotation around Z-axis (degrees), + = tilting clockwise
```

|

||||

|

||||

**Usage:**

|

||||

|

||||

```python

|

||||

result = head_pose.estimate(face_crop)

|

||||

print(f"Pitch: {result.pitch:.1f}°")

|

||||

print(f"Yaw: {result.yaw:.1f}°")

|

||||

print(f"Roll: {result.roll:.1f}°")

|

||||

```
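
The sign conventions documented for `HeadPoseResult` can be turned into a readable summary. This is a minimal sketch under stated assumptions: the dataclass below is a stand-in that mirrors the documented fields, `describe_pose` is a hypothetical helper rather than a UniFace function, and the 10° tolerance is an arbitrary choice.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HeadPoseResult:
    """Stand-in mirroring the documented fields; all angles are in degrees."""

    pitch: float
    yaw: float
    roll: float


def describe_pose(result, tolerance=10.0):
    """Summarize a pose in words (hypothetical helper; tolerance is an assumption)."""
    parts = []
    if abs(result.pitch) > tolerance:
        parts.append("looking down" if result.pitch > 0 else "looking up")
    if abs(result.yaw) > tolerance:
        parts.append("looking right" if result.yaw > 0 else "looking left")
    if abs(result.roll) > tolerance:
        parts.append("tilting clockwise" if result.roll > 0 else "tilting counter-clockwise")
    return ", ".join(parts) or "roughly frontal"


print(describe_pose(HeadPoseResult(pitch=15.0, yaw=-30.0, roll=2.0)))
# looking down, looking left
```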

|

||||

|

||||

---

|

||||

|

||||

### SpoofingResult

|

||||

|

||||

```python

|

||||

@@ -144,11 +165,11 @@ class AttributeResult:

|

||||

|

||||

```python

|

||||

# AgeGender model

|

||||

result = age_gender.predict(image, face.bbox)

|

||||

result = age_gender.predict(image, face)

|

||||

print(f"{result.sex}, {result.age} years old")

|

||||

|

||||

# FairFace model

|

||||

result = fairface.predict(image, face.bbox)

|

||||

result = fairface.predict(image, face)

|

||||

print(f"{result.sex}, {result.age_group}, {result.race}")

|

||||

```

|

||||

|

||||

@@ -171,7 +192,7 @@ Face recognition models return normalized 512-dimensional embeddings:

|

||||

|

||||

```python

|

||||

embedding = recognizer.get_normalized_embedding(image, landmarks)

|

||||

print(f"Shape: {embedding.shape}") # (1, 512)

|

||||

print(f"Shape: {embedding.shape}") # (512,)

|

||||

print(f"Norm: {np.linalg.norm(embedding):.4f}") # ~1.0

|

||||

```
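
Because the embeddings are normalized to unit length, cosine similarity between two of them reduces to a dot product. The sketch below illustrates this with short, dependency-free vectors; `l2_normalize` and `cosine_similarity` are hypothetical helpers, and the 2-dimensional inputs stand in for real 512-dimensional embeddings.

```python
import math


def l2_normalize(vec):
    """Scale a vector to unit length."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]


def cosine_similarity(a, b):
    """Dot product; equals cosine similarity only when both inputs are unit length."""
    return sum(x * y for x, y in zip(a, b))


# Toy vectors standing in for face embeddings
a = l2_normalize([3.0, 4.0])
b = l2_normalize([4.0, 3.0])
print(round(cosine_similarity(a, b), 4))  # 0.96
```

With NumPy arrays of shape `(512,)`, `np.dot(a, b)` computes the same quantity.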

|

||||

|

||||

|

||||

@@ -23,8 +23,10 @@ graph TB

|

||||

LMK[Landmarks]

|

||||

ATTR[Attributes]

|

||||

GAZE[Gaze]

|

||||

HPOSE[Head Pose]

|

||||

PARSE[Parsing]

|

||||

SPOOF[Anti-Spoofing]

|

||||

MATT[Matting]

|

||||

PRIV[Privacy]

|

||||

end

|

||||

|

||||

@@ -32,7 +34,7 @@ graph TB

|

||||

TRK[BYTETracker]

|

||||

end

|

||||

|

||||

subgraph Indexing

|

||||

subgraph Stores

|

||||

IDX[FAISS Vector Store]

|

||||

end

|

||||

|

||||

@@ -41,10 +43,12 @@ graph TB

|

||||

end

|

||||

|

||||

IMG --> DET

|

||||

IMG --> MATT

|

||||

DET --> REC

|

||||

DET --> LMK

|

||||

DET --> ATTR

|

||||

DET --> GAZE

|

||||

DET --> HPOSE

|

||||

DET --> PARSE

|

||||

DET --> SPOOF

|

||||

DET --> PRIV

|

||||

@@ -60,16 +64,14 @@ graph TB

|

||||

|

||||

## Design Principles

|

||||

|

||||

### 1. ONNX-First

|

||||

### 1. Cross-Platform Inference

|

||||

|

||||

UniFace runs inference primarily via ONNX Runtime for core components:

|

||||

UniFace uses portable model runtimes to provide consistent inference across macOS, Linux, and Windows. Most core components run through ONNX Runtime, while optional components may use PyTorch where appropriate.

|

||||

|

||||

- **Cross-platform**: Same models work on macOS, Linux, Windows

|

||||

- **Hardware acceleration**: Automatic selection of optimal provider

|

||||

- **Production-ready**: No Python-only dependencies for inference

|

||||

|

||||

Some optional components (e.g., emotion TorchScript, torchvision NMS) require PyTorch.

|

||||

|

||||

### 2. Minimal Dependencies

|

||||

|

||||

Core dependencies are kept minimal:

|

||||

@@ -113,16 +115,18 @@ def detect(self, image: np.ndarray) -> list[Face]:

|

||||

```

|

||||

uniface/

|

||||

├── detection/ # Face detection (RetinaFace, SCRFD, YOLOv5Face, YOLOv8Face)

|

||||

├── recognition/ # Face recognition (AdaFace, ArcFace, MobileFace, SphereFace)

|

||||

├── recognition/ # Face recognition (AdaFace, ArcFace, EdgeFace, MobileFace, SphereFace)

|

||||

├── tracking/ # Multi-object tracking (BYTETracker)

|

||||

├── landmark/ # 106-point landmarks

|

||||

├── attribute/ # Age, gender, emotion, race

|

||||

├── parsing/ # Face semantic segmentation

|

||||

├── matting/ # Portrait matting (MODNet)

|

||||

├── gaze/ # Gaze estimation

|

||||

├── headpose/ # Head pose estimation

|

||||

├── spoofing/ # Anti-spoofing

|

||||

├── privacy/ # Face anonymization

|

||||

├── indexing/ # Vector indexing (FAISS)

|

||||

├── types.py # Dataclasses (Face, GazeResult, etc.)

|

||||

├── stores/ # Vector stores (FAISS)

|

||||

├── types.py # Dataclasses (Face, GazeResult, HeadPoseResult, etc.)

|

||||

├── constants.py # Model weights and URLs

|

||||

├── model_store.py # Model download and caching

|

||||

├── onnx_utils.py # ONNX Runtime utilities

|

||||

@@ -158,7 +162,7 @@ for face in faces:

|

||||

embedding = recognizer.get_normalized_embedding(image, face.landmarks)

|

||||

|

||||

# Attributes

|

||||

attrs = age_gender.predict(image, face.bbox)

|

||||

attrs = age_gender.predict(image, face)

|

||||

|

||||

print(f"Face: {attrs.sex}, {attrs.age} years")

|

||||

```

|

||||

@@ -183,8 +187,7 @@ fairface = FairFace()

|

||||

analyzer = FaceAnalyzer(

|

||||

detector,

|

||||

recognizer=recognizer,

|

||||

age_gender=age_gender,

|

||||

fairface=fairface,

|

||||

attributes=[age_gender, fairface],

|

||||

)

|

||||

|

||||

faces = analyzer.analyze(image)

|

||||

|

||||

@@ -201,17 +201,11 @@ For drawing detections, filter by confidence:

|

||||

```python

|

||||

from uniface.draw import draw_detections

|

||||

|

||||

# Only draw high-confidence detections

|

||||

bboxes = [f.bbox for f in faces if f.confidence > 0.7]

|

||||

scores = [f.confidence for f in faces if f.confidence > 0.7]

|

||||

landmarks = [f.landmarks for f in faces if f.confidence > 0.7]

|

||||

|

||||

# Only draw high-confidence detections (confidence ≥ vis_threshold)

|

||||

draw_detections(

|

||||

image=image,

|

||||

bboxes=bboxes,

|

||||

scores=scores,

|

||||

landmarks=landmarks,

|

||||

vis_threshold=0.6 # Additional visualization filter

|

||||

faces=faces,

|

||||

vis_threshold=0.7,

|

||||

)

|

||||

```

|

||||

|

||||

|

||||

@@ -47,6 +47,38 @@ pre-commit run --all-files

|

||||

|

||||

---

|

||||

|

||||

## Commit Messages

|

||||

|

||||

We follow [Conventional Commits](https://www.conventionalcommits.org/):

|

||||

|

||||

```

|

||||

<type>: <short description>

|

||||

```

|

||||

|

||||

| Type         | When to use                                      |
|--------------|--------------------------------------------------|
| **feat**     | New feature or capability                        |
| **fix**      | Bug fix                                          |
| **docs**     | Documentation changes                            |
| **style**    | Formatting, whitespace (no logic change)         |
| **refactor** | Code restructuring without changing behavior     |
| **perf**     | Performance improvement                          |
| **test**     | Adding or updating tests                         |
| **build**    | Build system or dependencies                     |
| **ci**       | CI/CD and pre-commit configuration               |
| **chore**    | Routine maintenance and tooling                  |

|

||||

|

||||

**Examples:**

|

||||

|

||||

```

|

||||

feat: Add gaze estimation model

|

||||

fix: Correct bounding box scaling for non-square images

|

||||

ci: Add nbstripout pre-commit hook

|

||||

docs: Update installation instructions

|

||||

```
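
The `<type>: <short description>` format can be checked mechanically, for example in a local hook. The following is a minimal sketch, not the project's actual tooling: `is_valid_commit_subject` is a hypothetical helper, and it only covers the simple form shown above (no scopes or `!` markers, which the Conventional Commits specification also allows).

```python
import re

# Commit types taken from the table above
TYPES = ("feat", "fix", "docs", "style", "refactor", "perf", "test", "build", "ci", "chore")

# "<type>: <short description>" with a non-empty description
PATTERN = re.compile(rf"^({'|'.join(TYPES)}): \S.*$")


def is_valid_commit_subject(subject):
    """Return True if the subject line matches '<type>: <short description>'."""
    return PATTERN.match(subject) is not None


print(is_valid_commit_subject("feat: Add gaze estimation model"))  # True
print(is_valid_commit_subject("Add gaze estimation model"))        # False
```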

|

||||

|

||||

---

|

||||

|

||||

## Pull Request Process

|

||||

|

||||

1. Fork the repository

|

||||

@@ -67,6 +99,14 @@ pre-commit run --all-files

|

||||

|

||||

---

|

||||

|

||||

## Releases

|

||||

|

||||

Releases are automated via GitHub Actions. Maintainers trigger **Actions → Release Pipeline → Run workflow** with a [PEP 440](https://peps.python.org/pep-0440/) version (e.g. `0.7.0`, `0.7.0rc1`). The pipeline runs tests, bumps `pyproject.toml` + `uniface/__init__.py`, tags the commit, publishes to PyPI, and creates a GitHub Release. Docs redeploy only for stable releases.

|

||||

|

||||

See [CONTRIBUTING.md](https://github.com/yakhyo/uniface/blob/main/CONTRIBUTING.md#release-process) for the full process.

|

||||

|

||||

---

|

||||

|

||||

## Questions?

|

||||

|

||||

Open an issue on [GitHub](https://github.com/yakhyo/uniface/issues).

|

||||

|

||||

@@ -183,6 +183,30 @@ data/

|

||||

|

||||

---

|

||||

|

||||

### Head Pose Estimation

|

||||

|

||||

#### 300W-LP

|

||||

|

||||

Large-scale synthesized face dataset with large pose variations, generated from 300W by face profiling. Used for training head pose estimation models.

|

||||

|

||||

| Property    | Value                         |
| ----------- | ----------------------------- |
| Images      | ~122,000 (synthesized)        |
| Source      | 300W (profiled)               |
| Pose range  | ±90° yaw                      |
| Evaluation  | AFLW2000                      |
| Used by     | All HeadPose models           |

|

||||

|

||||

!!! info "Download & Reference"
    **Paper**: [Face Alignment Across Large Poses: A 3D Solution](https://arxiv.org/abs/1511.07212)

    **Training code**: [yakhyo/head-pose-estimation](https://github.com/yakhyo/head-pose-estimation)

!!! note "UniFace Models"
    All HeadPose models shipped with UniFace are trained on 300W-LP and evaluated on AFLW2000.

|

||||

|

||||

---

|

||||

|

||||

### Face Parsing

|

||||

|

||||

#### CelebAMask-HQ

|

||||

|

||||

@@ -10,7 +10,7 @@ template: home.html

|

||||

|

||||

# UniFace { .hero-title }

|

||||

|

||||

<p class="hero-subtitle">All-in-One Open-Source Face Analysis Library</p>

|

||||

<p class="hero-subtitle">A Unified Face Analysis Library for Python</p>

|

||||

|

||||

[](https://pypi.org/project/uniface/)

|

||||

[](https://www.python.org/)

|

||||

@@ -20,7 +20,7 @@ template: home.html

|

||||

[](https://www.kaggle.com/yakhyokhuja/code)

|

||||

[](https://discord.gg/wdzrjr7R5j)

|

||||

|

||||

<!-- <img src="https://raw.githubusercontent.com/yakhyo/uniface/main/.github/logos/uniface_rounded_q80.webp" alt="UniFace - All-in-One Open-Source Face Analysis Library" style="max-width: 70%; margin: 1rem 0;"> -->

|

||||

<!-- <img src="https://raw.githubusercontent.com/yakhyo/uniface/main/.github/logos/uniface_rounded_q80.webp" alt="UniFace - A Unified Face Analysis Library for Python" style="max-width: 70%; margin: 1rem 0;"> -->

|

||||

|

||||

[Get Started](quickstart.md){ .md-button .md-button--primary }

|

||||

[View on GitHub](https://github.com/yakhyo/uniface){ .md-button }

|

||||

@@ -31,12 +31,12 @@ template: home.html

|

||||

|

||||

<div class="feature-card" markdown>

|

||||

### :material-face-recognition: Face Detection

|

||||

ONNX-optimized detectors (RetinaFace, SCRFD, YOLO) with 5-point landmarks.

|

||||

RetinaFace, SCRFD, and YOLO detectors with 5-point landmarks.

|

||||

</div>

|

||||

|

||||

<div class="feature-card" markdown>

|

||||

### :material-account-check: Face Recognition

|

||||

AdaFace, ArcFace, MobileFace, and SphereFace embeddings for identity verification.

|

||||

AdaFace, ArcFace, EdgeFace, MobileFace, and SphereFace embeddings for identity verification.

|

||||

</div>

|

||||

|

||||

<div class="feature-card" markdown>

|

||||

@@ -59,6 +59,11 @@ BiSeNet semantic segmentation with 19 facial component classes.

|

||||

Real-time gaze direction prediction with MobileGaze models.

|

||||

</div>

|

||||

|

||||

<div class="feature-card" markdown>

|

||||

### :material-axis-arrow: Head Pose

|

||||

3D head orientation (pitch, yaw, roll) estimation with 6D rotation models.

|

||||

</div>

|

||||

|

||||

<div class="feature-card" markdown>

|

||||

### :material-motion-play: Tracking

|

||||

Multi-object tracking with BYTETracker for persistent face IDs across video frames.

|

||||

@@ -85,14 +90,14 @@ FAISS-backed embedding store for fast multi-identity face search.

|

||||

|

||||

## Installation

|

||||

|

||||

UniFace runs inference primarily via **ONNX Runtime**; some optional components (e.g., emotion TorchScript, torchvision NMS) require **PyTorch**.

|

||||

UniFace uses portable model runtimes for consistent inference across macOS, Linux, and Windows. Most core components run through **ONNX Runtime**, while optional components may use **PyTorch** where appropriate.

|

||||

|

||||

**Standard**

|

||||

**CPU / Apple Silicon**

|

||||

```bash

|

||||

pip install uniface

|

||||

pip install uniface[cpu]

|

||||

```

|

||||

|

||||

**GPU (CUDA)**

|

||||

**GPU (NVIDIA CUDA)**

|

||||

```bash

|

||||

pip install uniface[gpu]

|

||||

```

|

||||

@@ -101,7 +106,7 @@ pip install uniface[gpu]

|

||||

```bash

|

||||

git clone https://github.com/yakhyo/uniface.git

|

||||

cd uniface

|

||||

pip install -e .

|

||||

pip install -e ".[cpu]" # or .[gpu] for CUDA

|

||||

```

|

||||

|

||||

---

|

||||

|

||||

@@ -11,15 +11,27 @@ This guide covers all installation options for UniFace.

|

||||

|

||||

---

|

||||

|

||||

## Why Two Extras?

|

||||

|

||||

`onnxruntime` (CPU) and `onnxruntime-gpu` (CUDA) both own the same Python namespace.

|

||||

Installing both at the same time causes file conflicts and silent provider mismatches.

|

||||

UniFace exposes them as separate, mutually exclusive extras so you install exactly one.
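
To check whether an environment already contains both packages, the installed distributions can be inspected with the standard library. This is a minimal sketch: `installed_onnxruntime_variants` is a hypothetical helper, not a UniFace API, and it relies only on `importlib.metadata`.

```python
from importlib import metadata


def installed_onnxruntime_variants(names=("onnxruntime", "onnxruntime-gpu")):
    """Return {distribution name: version} for each listed package that is installed."""
    found = {}
    for name in names:
        try:
            found[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            pass  # this distribution is not installed
    return found


variants = installed_onnxruntime_variants()
if len(variants) > 1:
    print("Conflict: both ONNX Runtime packages are installed:", variants)
else:
    print("Installed:", variants or "none")
```

If both names are reported, uninstalling both and reinstalling a single extra (`uniface[cpu]` or `uniface[gpu]`) restores a consistent environment.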

|

||||

|

||||

---

|

||||

|

||||

## Quick Install

|

||||

|

||||

The simplest way to install UniFace:

|

||||

=== "CPU / Apple Silicon"

|

||||

|

||||

```bash

|

||||

pip install uniface

|

||||

```

|

||||

```bash

|

||||

pip install uniface[cpu]

|

||||

```

|

||||

|

||||

This installs the CPU version with all core dependencies.

|

||||

=== "NVIDIA GPU (CUDA)"

|

||||

|

||||

```bash

|

||||

pip install uniface[gpu]

|

||||

```

|

||||

|

||||

---

|

||||

|

||||

@@ -27,14 +39,16 @@ This installs the CPU version with all core dependencies.

|

||||

|

||||

### macOS (Apple Silicon - M1/M2/M3/M4)

|

||||

|

||||

For Apple Silicon Macs, the standard installation automatically includes ARM64 optimizations:

|

||||

The `[cpu]` extra pulls in the standard `onnxruntime` package, which has native ARM64 support

|

||||

built in since version 1.13. No additional setup is needed for CoreML acceleration.

|

||||

|

||||

```bash

|

||||

pip install uniface

|

||||

pip install uniface[cpu]

|

||||

```

|

||||

|

||||

!!! tip "Native Performance"

|

||||

The base `onnxruntime` package has native Apple Silicon support with ARM64 optimizations built-in since version 1.13+. No additional configuration needed.

|

||||

`onnxruntime` 1.13+ includes ARM64 optimizations out of the box.

|

||||

UniFace automatically detects and enables `CoreMLExecutionProvider` on Apple Silicon.

|

||||

|

||||

Verify ARM64 installation:

|

||||

|

||||

@@ -47,18 +61,22 @@ python -c "import platform; print(platform.machine())"

|

||||

|

||||

### Linux/Windows with NVIDIA GPU

|

||||

|

||||

For CUDA acceleration on NVIDIA GPUs:

|

||||

|

||||

```bash

|

||||

pip install uniface[gpu]

|

||||

```

|

||||

|

||||

This installs `onnxruntime-gpu`, which includes both `CUDAExecutionProvider` and

|

||||

`CPUExecutionProvider` — no separate CPU package is needed.

|

||||

|

||||

**Requirements:**

|

||||

|

||||

- `uniface[gpu]` automatically installs `onnxruntime-gpu`. Requirements depend on the ORT version and execution provider.

|

||||

- NVIDIA driver compatible with your CUDA version

|

||||

- CUDA 11.x or 12.x toolkit

|

||||

- cuDNN 8.x

|

||||

|

||||

!!! info "CUDA Compatibility"

|

||||

See the [ONNX Runtime GPU compatibility matrix](https://onnxruntime.ai/docs/execution-providers/CUDA-ExecutionProvider.html) for matching CUDA and cuDNN versions.

|

||||

See the [ONNX Runtime GPU compatibility matrix](https://onnxruntime.ai/docs/execution-providers/CUDA-ExecutionProvider.html)

|

||||

for matching CUDA and cuDNN versions.

|

||||

|

||||

Verify GPU installation:

|

||||

|

||||

@@ -70,23 +88,10 @@ print("Available providers:", ort.get_available_providers())

|

||||

|

||||

---

|

||||

|

||||

### FAISS Vector Indexing

|

||||

|

||||

For fast multi-identity face search using a FAISS index:

|

||||

|

||||

```bash

|

||||

pip install faiss-cpu # CPU

|

||||

pip install faiss-gpu # NVIDIA GPU (CUDA)

|

||||