Compare commits

5 Commits

| Author | SHA1 | Date |
|---|---|---|
| | 9bf54f5f78 | |
| | c87ec1ad0f | |
| | 9e56a86963 | |
| | 426bd71505 | |
| | ede8b27091 | |

2

.github/workflows/ci.yml

vendored

@@ -33,6 +33,8 @@ jobs:
      matrix:
        include:
          # Full Python range on Linux (fastest runner)
          - os: ubuntu-latest
            python-version: "3.10"
          - os: ubuntu-latest
            python-version: "3.11"
          - os: ubuntu-latest

2

.github/workflows/publish.yml

vendored

@@ -54,7 +54,7 @@ jobs:
     strategy:
       fail-fast: false
       matrix:
-        python-version: ["3.11", "3.13"]
+        python-version: ["3.10", "3.11", "3.13"]

     steps:
       - name: Checkout code

@@ -18,6 +18,13 @@ repos:

|

||||

- id: debug-statements

|

||||

- id: check-ast

|

||||

|

||||

# Strip Jupyter notebook outputs

|

||||

- repo: https://github.com/kynan/nbstripout

|

||||

rev: 0.9.1

|

||||

hooks:

|

||||

- id: nbstripout

|

||||

files: ^examples/

|

||||

|

||||

# Ruff - Fast Python linter and formatter

|

||||

- repo: https://github.com/astral-sh/ruff-pre-commit

|

||||

rev: v0.14.10

|

||||

|

||||

6

AGENTS.md

Normal file

@@ -0,0 +1,6 @@

|

||||

<!-- Cursor agent instructions — shared with CLAUDE.md -->

|

||||

<!-- See CLAUDE.md for full project instructions for AI coding agents. -->

|

||||

|

||||

# AGENTS.md

|

||||

|

||||

Please read and follow all instructions in [CLAUDE.md](./CLAUDE.md).

|

||||

81

CLAUDE.md

Normal file

@@ -0,0 +1,81 @@

|

||||

# CLAUDE.md

|

||||

|

||||

Project instructions for AI coding agents.

|

||||

|

||||

## Project Overview

|

||||

|

||||

UniFace is a Python library for face detection, recognition, tracking, landmark analysis, face parsing, gaze estimation, and age/gender detection. It uses ONNX Runtime for inference.

|

||||

|

||||

## Code Style

|

||||

|

||||

- Python 3.10+ with type hints

|

||||

- Line length: 120

|

||||

- Single quotes for strings, double quotes for docstrings

|

||||

- Google-style docstrings

|

||||

- Formatter/linter: Ruff (config in `pyproject.toml`)

|

||||

- Run `ruff format .` and `ruff check . --fix` before committing

|

||||

|

||||

## Commit Messages

|

||||

|

||||

Follow [Conventional Commits](https://www.conventionalcommits.org/) with a **capitalized** description:

|

||||

|

||||

```

|

||||

<type>: <Capitalized short description>

|

||||

```

|

||||

|

||||

Types: `feat`, `fix`, `docs`, `style`, `refactor`, `perf`, `test`, `build`, `ci`, `chore`

|

||||

|

||||

Examples:

|

||||

- `feat: Add gaze estimation model`

|

||||

- `fix: Correct bounding box scaling for non-square images`

|

||||

- `ci: Add nbstripout pre-commit hook`

|

||||

- `docs: Update installation instructions`

|

||||

- `refactor: Unify attribute/detector base classes`
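The commit-message rule above (`<type>: <Capitalized short description>`) is easy to check mechanically. The following is a minimal sketch, assuming a plain regular expression is enough; the names `COMMIT_TYPES`, `COMMIT_RE`, and `is_valid_commit_subject` are hypothetical and not part of the repository.

```python
import re

# Types listed in the commit-message section above.
COMMIT_TYPES = ('feat', 'fix', 'docs', 'style', 'refactor', 'perf', 'test', 'build', 'ci', 'chore')

# Pattern for '<type>: <Capitalized short description>'.
# Hypothetical helper for illustration; the project may enforce this differently (or not at all).
COMMIT_RE = re.compile(r'^(?P<type>' + '|'.join(COMMIT_TYPES) + r'): (?P<desc>[A-Z].*)$')


def is_valid_commit_subject(subject: str) -> bool:
    """Return True if the subject line matches '<type>: <Capitalized description>'."""
    return COMMIT_RE.match(subject) is not None


for subject in (
    'feat: Add gaze estimation model',     # valid
    'feat: add gaze estimation model',     # description not capitalized
    'feature: Add gaze estimation model',  # unknown type
):
    print(subject, '->', is_valid_commit_subject(subject))
```

A check like this could run in a `commit-msg` hook, though whether the project wants that enforcement is a separate decision.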

|

||||

|

||||

## Testing

|

||||

|

||||

```bash

|

||||

pytest -v --tb=short

|

||||

```

|

||||

|

||||

Tests live in `tests/`. Run the full suite before submitting changes.

|

||||

|

||||

## Pre-commit

|

||||

|

||||

Pre-commit hooks handle formatting, linting, security checks, and notebook output stripping. Always run:

|

||||

|

||||

```bash

|

||||

pre-commit install

|

||||

pre-commit run --all-files

|

||||

```

|

||||

|

||||

## Project Structure

|

||||

|

||||

```

|

||||

uniface/ # Main package

|

||||

detection/ # Face detection models (SCRFD, RetinaFace, YOLOv5, YOLOv8)

|

||||

recognition/ # Face recognition/verification (AdaFace, ArcFace, EdgeFace, MobileFace, SphereFace)

|

||||

landmark/ # Facial landmark models

|

||||

tracking/ # Object tracking (ByteTrack)

|

||||

parsing/ # Face parsing/segmentation (BiSeNet, XSeg)

|

||||

gaze/ # Gaze estimation

|

||||

headpose/ # Head pose estimation

|

||||

attribute/ # Age, gender, emotion detection

|

||||

spoofing/ # Anti-spoofing (MiniFASNet)

|

||||

privacy/ # Face anonymization

|

||||

stores/ # Vector stores (FAISS)

|

||||

constants.py # Model weight URLs and checksums

|

||||

model_store.py # Model download/cache management

|

||||

analyzer.py # High-level FaceAnalyzer API

|

||||

types.py # Shared type definitions

|

||||

tests/ # Unit tests

|

||||

examples/ # Jupyter notebooks (outputs are auto-stripped)

|

||||

docs/ # MkDocs documentation

|

||||

```

|

||||

|

||||

## Key Conventions

|

||||

|

||||

- New models: add class in submodule, register weights in `constants.py`, export in `__init__.py`

|

||||

- Dependencies: managed in `pyproject.toml`

|

||||

- All ONNX models are downloaded on demand with SHA256 verification

|

||||

- Do not commit notebook outputs; `nbstripout` pre-commit hook handles this
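The SHA256 verification mentioned in the conventions above can be illustrated with the standard library alone. This is a sketch under assumptions: `sha256_of_file` and `verify_checksum` are hypothetical helpers, not the functions in `model_store.py`, and the demo file stands in for a downloaded ONNX weight file.

```python
import hashlib
import tempfile
from pathlib import Path


def sha256_of_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large weight files are not read into memory at once."""
    digest = hashlib.sha256()
    with path.open('rb') as f:
        for chunk in iter(lambda: f.read(chunk_size), b''):
            digest.update(chunk)
    return digest.hexdigest()


def verify_checksum(path: Path, expected_sha256: str) -> bool:
    """Compare the file's SHA256 hex digest with the expected value (case-insensitive)."""
    return sha256_of_file(path) == expected_sha256.lower()


# Demo with a throwaway file; a real check would use the checksum registered for the model.
with tempfile.TemporaryDirectory() as tmp:
    weights = Path(tmp) / 'model.onnx'
    weights.write_bytes(b'not a real model')
    expected = hashlib.sha256(b'not a real model').hexdigest()
    print(verify_checksum(weights, expected))   # matching checksum
    print(verify_checksum(weights, '0' * 64))   # mismatching checksum
```

On a mismatch, a downloader would typically delete the file and raise an error rather than load it.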

|

||||

145

README.md

@@ -3,7 +3,7 @@

|

||||

<div align="center">

|

||||

|

||||

[](https://pypi.org/project/uniface/)

|

||||

[](https://www.python.org/)

|

||||

[](https://www.python.org/)

|

||||

[](https://opensource.org/licenses/MIT)

|

||||

[](https://github.com/yakhyo/uniface/actions)

|

||||

[](https://pepy.tech/projects/uniface)

|

||||

@@ -26,20 +26,50 @@

|

||||

## Features

|

||||

|

||||

- **Face Detection** — RetinaFace, SCRFD, YOLOv5-Face, and YOLOv8-Face with 5-point landmarks

|

||||

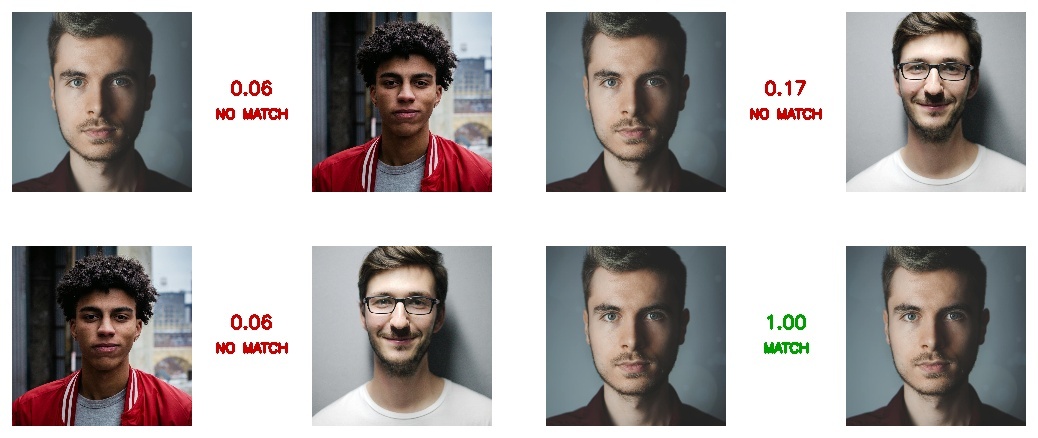

- **Face Recognition** — ArcFace, MobileFace, and SphereFace embeddings

|

||||

- **Face Recognition** — AdaFace, ArcFace, EdgeFace, MobileFace, and SphereFace embeddings

|

||||

- **Face Tracking** — Multi-object tracking with [BYTETracker](https://github.com/yakhyo/bytetrack-tracker) for persistent IDs across video frames

|

||||

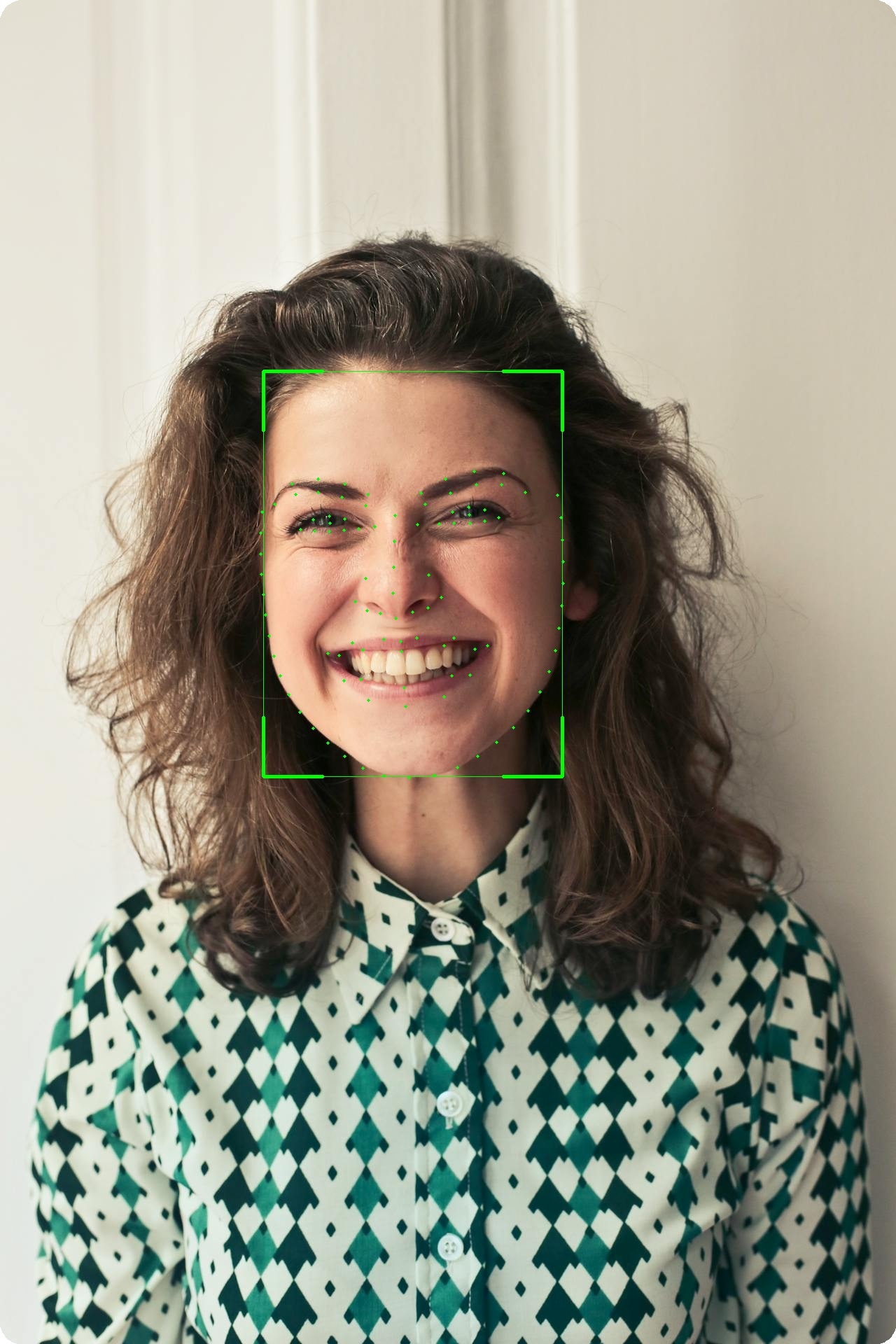

- **Facial Landmarks** — 106-point landmark localization module (separate from 5-point detector landmarks)

|

||||

- **Face Parsing** — BiSeNet semantic segmentation (19 classes), XSeg face masking

|

||||

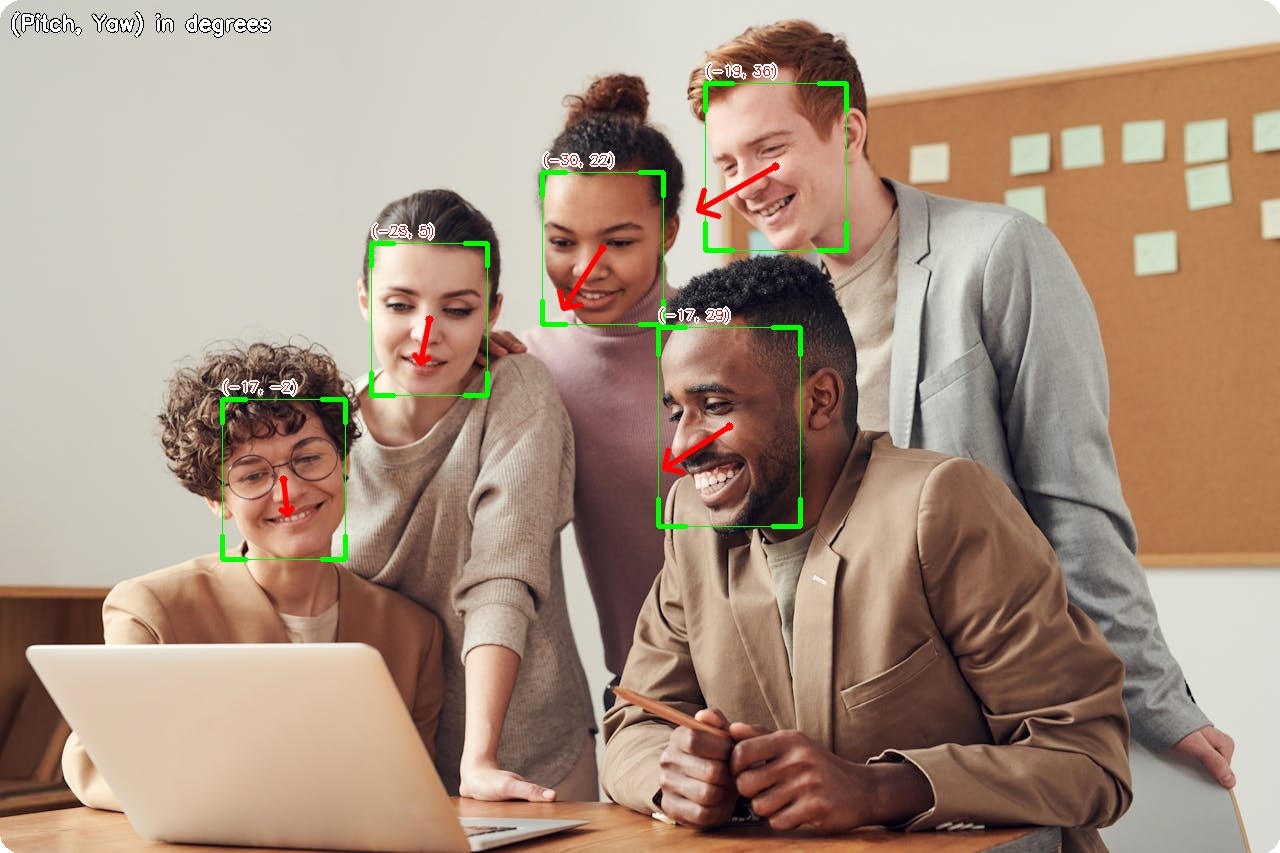

- **Gaze Estimation** — Real-time gaze direction with MobileGaze

|

||||

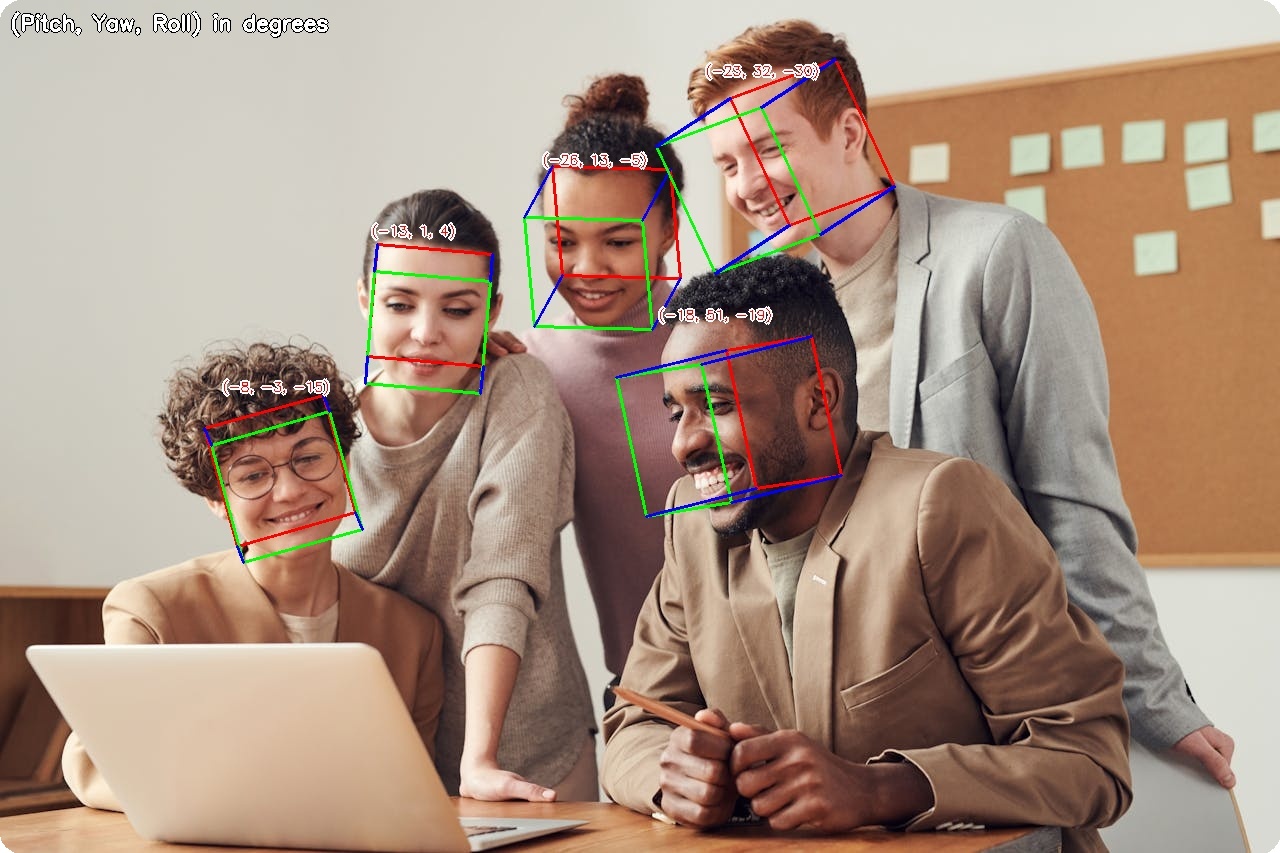

- **Head Pose Estimation** — 3D head orientation (pitch, yaw, roll) with 6D rotation representation

|

||||

- **Attribute Analysis** — Age, gender, race (FairFace), and emotion

|

||||

- **Vector Indexing** — FAISS-backed embedding store for fast multi-identity search

|

||||

- **Vector Store** — FAISS-backed embedding store for fast multi-identity search

|

||||

- **Anti-Spoofing** — Face liveness detection with MiniFASNet

|

||||

- **Face Anonymization** — 5 blur methods for privacy protection

|

||||

- **Hardware Acceleration** — ARM64 (Apple Silicon), CUDA (NVIDIA), CPU

|

||||

|

||||

---

|

||||

|

||||

## Visual Examples

|

||||

|

||||

<table>

|

||||

<tr>

|

||||

<td align="center"><b>Face Detection</b><br><img src="https://raw.githubusercontent.com/yakhyo/uniface/main/assets/demos/detection.jpg" width="100%"></td>

|

||||

<td align="center"><b>Gaze Estimation</b><br><img src="https://raw.githubusercontent.com/yakhyo/uniface/main/assets/demos/gaze.jpg" width="100%"></td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td align="center"><b>Head Pose Estimation</b><br><img src="https://raw.githubusercontent.com/yakhyo/uniface/main/assets/demos/headpose.jpg" width="100%"></td>

|

||||

<td align="center"><b>Age & Gender</b><br><img src="https://raw.githubusercontent.com/yakhyo/uniface/main/assets/demos/age_gender.jpg" width="100%"></td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td align="center" colspan="2"><b>Face Verification</b><br><img src="https://raw.githubusercontent.com/yakhyo/uniface/main/assets/demos/verification.jpg" width="80%"></td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td align="center" colspan="2"><b>106-Point Landmarks</b><br><img src="https://raw.githubusercontent.com/yakhyo/uniface/main/assets/demos/landmarks.jpg" width="36%"></td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td align="center" colspan="2"><b>Face Parsing</b><br><img src="https://raw.githubusercontent.com/yakhyo/uniface/main/assets/demos/parsing.jpg" width="80%"></td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td align="center" colspan="2"><b>Face Segmentation</b><br><img src="https://raw.githubusercontent.com/yakhyo/uniface/main/assets/demos/segmentation.jpg" width="80%"></td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td align="center" colspan="2"><b>Face Anonymization</b><br><img src="https://raw.githubusercontent.com/yakhyo/uniface/main/assets/demos/anonymization.jpg" width="100%"></td>

|

||||

</tr>

|

||||

</table>

|

||||

|

||||

---

|

||||

|

||||

## Installation

|

||||

|

||||

**Standard installation**

|

||||

@@ -61,7 +91,7 @@ git clone https://github.com/yakhyo/uniface.git

|

||||

cd uniface && pip install -e .

|

||||

```

|

||||

|

||||

**FAISS vector indexing**

|

||||

**FAISS vector store**

|

||||

|

||||

```bash

|

||||

pip install faiss-cpu # or faiss-gpu for CUDA

|

||||

@@ -127,14 +157,10 @@ for face in faces:

|

||||

|

||||

```python

|

||||

import cv2

|

||||

from uniface.analyzer import FaceAnalyzer

|

||||

from uniface.detection import RetinaFace

|

||||

from uniface.recognition import ArcFace

|

||||

from uniface import FaceAnalyzer

|

||||

|

||||

detector = RetinaFace()

|

||||

recognizer = ArcFace()

|

||||

|

||||

analyzer = FaceAnalyzer(detector, recognizer=recognizer)

|

||||

# Zero-config: uses SCRFD (500M) + ArcFace (MobileNet) by default

|

||||

analyzer = FaceAnalyzer()

|

||||

|

||||

image = cv2.imread("photo.jpg")

|

||||

if image is None:

|

||||

@@ -146,52 +172,18 @@ for face in faces:

|

||||

print(face.bbox, face.embedding.shape if face.embedding is not None else None)

|

||||

```

|

||||

|

||||

---

|

||||

|

||||

## Execution Providers (ONNX Runtime)

|

||||

With attributes:

|

||||

|

||||

```python

|

||||

from uniface.detection import RetinaFace

|

||||

from uniface import FaceAnalyzer, AgeGender

|

||||

|

||||

# Force CPU-only inference

|

||||

detector = RetinaFace(providers=["CPUExecutionProvider"])

|

||||

analyzer = FaceAnalyzer(attributes=[AgeGender()])

|

||||

faces = analyzer.analyze(image)

|

||||

|

||||

for face in faces:

|

||||

print(f"{face.sex}, {face.age}y, embedding={face.embedding.shape}")

|

||||

```

|

||||

|

||||

See more in the docs:

|

||||

https://yakhyo.github.io/uniface/concepts/execution-providers/

|

||||

|

||||

---

|

||||

|

||||

## Documentation

|

||||

|

||||

Full documentation: https://yakhyo.github.io/uniface/

|

||||

|

||||

| Resource | Description |

|

||||

|----------|-------------|

|

||||

| [Quickstart](https://yakhyo.github.io/uniface/quickstart/) | Get up and running in 5 minutes |

|

||||

| [Model Zoo](https://yakhyo.github.io/uniface/models/) | All models, benchmarks, and selection guide |

|

||||

| [API Reference](https://yakhyo.github.io/uniface/modules/detection/) | Detailed module documentation |

|

||||

| [Tutorials](https://yakhyo.github.io/uniface/recipes/image-pipeline/) | Step-by-step workflow examples |

|

||||

| [Guides](https://yakhyo.github.io/uniface/concepts/overview/) | Architecture and design principles |

|

||||

| [Datasets](https://yakhyo.github.io/uniface/datasets/) | Training data and evaluation benchmarks |

|

||||

|

||||

---

|

||||

|

||||

## Datasets

|

||||

|

||||

| Task | Training Dataset | Models |

|

||||

|------|-----------------|--------|

|

||||

| Detection | WIDER FACE | RetinaFace, SCRFD, YOLOv5-Face, YOLOv8-Face |

|

||||

| Recognition | MS1MV2 | MobileFace, SphereFace |

|

||||

| Recognition | WebFace600K | ArcFace |

|

||||

| Recognition | WebFace4M / 12M | AdaFace |

|

||||

| Gaze | Gaze360 | MobileGaze |

|

||||

| Head Pose | 300W-LP | HeadPose (ResNet, MobileNet) |

|

||||

| Parsing | CelebAMask-HQ | BiSeNet |

|

||||

| Attributes | CelebA, FairFace, AffectNet | AgeGender, FairFace, Emotion |

|

||||

|

||||

> See [Datasets documentation](https://yakhyo.github.io/uniface/datasets/) for download links, benchmarks, and details.

|

||||

|

||||

---

|

||||

|

||||

## Jupyter Notebooks

|

||||

@@ -209,6 +201,54 @@ Full documentation: https://yakhyo.github.io/uniface/

|

||||

| [09_face_segmentation.ipynb](examples/09_face_segmentation.ipynb) | [](https://colab.research.google.com/github/yakhyo/uniface/blob/main/examples/09_face_segmentation.ipynb) | Face segmentation with XSeg |

|

||||

| [10_face_vector_store.ipynb](examples/10_face_vector_store.ipynb) | [](https://colab.research.google.com/github/yakhyo/uniface/blob/main/examples/10_face_vector_store.ipynb) | FAISS-backed face database |

|

||||

| [11_head_pose_estimation.ipynb](examples/11_head_pose_estimation.ipynb) | [](https://colab.research.google.com/github/yakhyo/uniface/blob/main/examples/11_head_pose_estimation.ipynb) | Head pose estimation (pitch, yaw, roll) |

|

||||

| [12_face_recognition.ipynb](examples/12_face_recognition.ipynb) | [](https://colab.research.google.com/github/yakhyo/uniface/blob/main/examples/12_face_recognition.ipynb) | Standalone face recognition pipeline |

|

||||

|

||||

---

|

||||

|

||||

## Documentation

|

||||

|

||||

Full documentation: https://yakhyo.github.io/uniface/

|

||||

|

||||

| Resource | Description |

|

||||

|----------|-------------|

|

||||

| [Quickstart](https://yakhyo.github.io/uniface/quickstart/) | Get up and running in 5 minutes |

|

||||

| [Model Zoo](https://yakhyo.github.io/uniface/models/) | All models, benchmarks, and selection guide |

|

||||

| [API Reference](https://yakhyo.github.io/uniface/modules/detection/) | Detailed module documentation |

|

||||

| [Tutorials](https://yakhyo.github.io/uniface/recipes/image-pipeline/) | Step-by-step workflow examples |

|

||||

| [Guides](https://yakhyo.github.io/uniface/concepts/overview/) | Architecture and design principles |

|

||||

| [Datasets](https://yakhyo.github.io/uniface/datasets/) | Training data and evaluation benchmarks |

|

||||

|

||||

---

|

||||

|

||||

## Execution Providers (ONNX Runtime)

|

||||

|

||||

```python

|

||||

from uniface.detection import RetinaFace

|

||||

|

||||

# Force CPU-only inference

|

||||

detector = RetinaFace(providers=["CPUExecutionProvider"])

|

||||

```

|

||||

|

||||

See more in the docs:

|

||||

https://yakhyo.github.io/uniface/concepts/execution-providers/
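Beyond forcing CPU, a caller may want to pass an ordered preference list to `providers=`. The sketch below shows one way to build that list; `select_providers` is a hypothetical helper, and in real use `available` would come from `onnxruntime.get_available_providers()` (hard-coded here so the example runs without ONNX Runtime installed).

```python
def select_providers(available: list[str], preferred: list[str]) -> list[str]:
    """Keep the preferred providers that are available, in preference order.

    CPUExecutionProvider is appended as a last-resort fallback if not already chosen.
    """
    chosen = [p for p in preferred if p in available]
    if 'CPUExecutionProvider' not in chosen:
        chosen.append('CPUExecutionProvider')
    return chosen


# Assumption: a machine where only the CPU provider is installed.
available = ['CPUExecutionProvider']
preferred = ['CUDAExecutionProvider', 'CoreMLExecutionProvider', 'CPUExecutionProvider']

providers = select_providers(available, preferred)
print(providers)
```

The resulting list could then be passed as `RetinaFace(providers=providers)`, following the constructor argument shown in the README example.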

|

||||

|

||||

---

|

||||

|

||||

## Datasets

|

||||

|

||||

| Task | Training Dataset | Models |

|

||||

|------|-----------------|--------|

|

||||

| Detection | WIDER FACE | RetinaFace, SCRFD, YOLOv5-Face, YOLOv8-Face |

|

||||

| Recognition | MS1MV2 | MobileFace, SphereFace |

|

||||

| Recognition | WebFace600K | ArcFace |

|

||||

| Recognition | WebFace4M / 12M | AdaFace |

|

||||

| Recognition | MS1MV2 | EdgeFace |

|

||||

| Gaze | Gaze360 | MobileGaze |

|

||||

| Head Pose | 300W-LP | HeadPose (ResNet, MobileNet) |

|

||||

| Parsing | CelebAMask-HQ | BiSeNet |

|

||||

| Attributes | CelebA, FairFace, AffectNet | AgeGender, FairFace, Emotion |

|

||||

|

||||

> See [Datasets documentation](https://yakhyo.github.io/uniface/datasets/) for download links, benchmarks, and details.

|

||||

|

||||

---

|

||||

|

||||

@@ -234,6 +274,7 @@ If you plan commercial use, verify model license compatibility.

|

||||

| Detection | [yolov8-face-onnx-inference](https://github.com/yakhyo/yolov8-face-onnx-inference) | - | YOLOv8-Face ONNX Inference |

|

||||

| Tracking | [bytetrack-tracker](https://github.com/yakhyo/bytetrack-tracker) | - | BYTETracker Multi-Object Tracking |

|

||||

| Recognition | [face-recognition](https://github.com/yakhyo/face-recognition) | ✓ | MobileFace, SphereFace Training |

|

||||

| Recognition | [edgeface-onnx](https://github.com/yakhyo/edgeface-onnx) | - | EdgeFace ONNX Inference |

|

||||

| Parsing | [face-parsing](https://github.com/yakhyo/face-parsing) | ✓ | BiSeNet Face Parsing |

|

||||

| Parsing | [face-segmentation](https://github.com/yakhyo/face-segmentation) | - | XSeg Face Segmentation |

|

||||

| Gaze | [gaze-estimation](https://github.com/yakhyo/gaze-estimation) | ✓ | MobileGaze Training |

|

||||

|

||||

BIN

assets/demos/age_gender.jpg

Normal file

|

After Width: | Height: | Size: 206 KiB |

BIN

assets/demos/anonymization.jpg

Normal file

|

After Width: | Height: | Size: 1.5 MiB |

BIN

assets/demos/detection.jpg

Normal file

|

After Width: | Height: | Size: 341 KiB |

BIN

assets/demos/gaze.jpg

Normal file

|

After Width: | Height: | Size: 212 KiB |

BIN

assets/demos/headpose.jpg

Normal file

|

After Width: | Height: | Size: 233 KiB |

BIN

assets/demos/landmarks.jpg

Normal file

|

After Width: | Height: | Size: 428 KiB |

BIN

assets/demos/parsing.jpg

Normal file

|

After Width: | Height: | Size: 712 KiB |

BIN

assets/demos/segmentation.jpg

Normal file

|

After Width: | Height: | Size: 851 KiB |

BIN

assets/demos/src_friends.jpg

Normal file

|

After Width: | Height: | Size: 171 KiB |

BIN

assets/demos/src_man1.jpg

Normal file

|

After Width: | Height: | Size: 63 KiB |

BIN

assets/demos/src_man2.jpg

Normal file

|

After Width: | Height: | Size: 220 KiB |

BIN

assets/demos/src_man3.jpg

Normal file

|

After Width: | Height: | Size: 146 KiB |

BIN

assets/demos/src_meeting.jpg

Normal file

|

After Width: | Height: | Size: 96 KiB |

BIN

assets/demos/src_portrait1.jpg

Normal file

|

After Width: | Height: | Size: 208 KiB |

BIN

assets/demos/verification.jpg

Normal file

|

After Width: | Height: | Size: 121 KiB |

BIN

assets/test_images/image5.jpg

Normal file

|

After Width: | Height: | Size: 5.8 KiB |

@@ -33,7 +33,7 @@ graph TB

|

||||

TRK[BYTETracker]

|

||||

end

|

||||

|

||||

subgraph Indexing

|

||||

subgraph Stores

|

||||

IDX[FAISS Vector Store]

|

||||

end

|

||||

|

||||

@@ -115,7 +115,7 @@ def detect(self, image: np.ndarray) -> list[Face]:

|

||||

```

|

||||

uniface/

|

||||

├── detection/ # Face detection (RetinaFace, SCRFD, YOLOv5Face, YOLOv8Face)

|

||||

├── recognition/ # Face recognition (AdaFace, ArcFace, MobileFace, SphereFace)

|

||||

├── recognition/ # Face recognition (AdaFace, ArcFace, EdgeFace, MobileFace, SphereFace)

|

||||

├── tracking/ # Multi-object tracking (BYTETracker)

|

||||

├── landmark/ # 106-point landmarks

|

||||

├── attribute/ # Age, gender, emotion, race

|

||||

@@ -124,7 +124,7 @@ uniface/

|

||||

├── headpose/ # Head pose estimation

|

||||

├── spoofing/ # Anti-spoofing

|

||||

├── privacy/ # Face anonymization

|

||||

├── indexing/ # Vector indexing (FAISS)

|

||||

├── stores/ # Vector stores (FAISS)

|

||||

├── types.py # Dataclasses (Face, GazeResult, HeadPoseResult, etc.)

|

||||

├── constants.py # Model weights and URLs

|

||||

├── model_store.py # Model download and caching

|

||||

|

||||

@@ -201,17 +201,11 @@ For drawing detections, filter by confidence:
 ```python
 from uniface.draw import draw_detections

-# Only draw high-confidence detections
-bboxes = [f.bbox for f in faces if f.confidence > 0.7]
-scores = [f.confidence for f in faces if f.confidence > 0.7]
-landmarks = [f.landmarks for f in faces if f.confidence > 0.7]
-
+# Only draw high-confidence detections (confidence ≥ vis_threshold)
 draw_detections(
     image=image,
-    bboxes=bboxes,
-    scores=scores,
-    landmarks=landmarks,
-    vis_threshold=0.6  # Additional visualization filter
+    faces=faces,
+    vis_threshold=0.7,
 )
 ```
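The new call moves the confidence filter from the caller into `vis_threshold`. As a rough illustration of what that filter amounts to, here is a sketch assuming the comparison is `confidence >= vis_threshold`, as the updated comment states; `Detection` and `filter_by_confidence` are hypothetical stand-ins, not UniFace's `Face` class or the internals of `draw_detections`.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    """Hypothetical stand-in for a detected face (not UniFace's Face dataclass)."""

    bbox: tuple[float, float, float, float]
    confidence: float


def filter_by_confidence(detections: list[Detection], vis_threshold: float) -> list[Detection]:
    """Keep detections whose confidence is at least vis_threshold."""
    return [d for d in detections if d.confidence >= vis_threshold]


detections = [
    Detection((10, 10, 50, 50), 0.95),
    Detection((60, 20, 90, 70), 0.65),
    Detection((120, 30, 160, 80), 0.70),
]

kept = filter_by_confidence(detections, vis_threshold=0.7)
print([d.confidence for d in kept])  # [0.95, 0.7]
```

Note that the removed caller-side filter used a strict `> 0.7`, so a detection at exactly 0.7 is treated differently under the `≥` wording.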

|

||||

|

||||

|

||||

@@ -32,7 +32,7 @@ ruff check . --fix

|

||||

**Guidelines:**

|

||||

|

||||

- Line length: 120

|

||||

- Python 3.11+ type hints

|

||||

- Python 3.10+ type hints

|

||||

- Google-style docstrings

|

||||

|

||||

---

|

||||

@@ -47,6 +47,38 @@ pre-commit run --all-files

|

||||

|

||||

---

|

||||

|

||||

## Commit Messages

|

||||

|

||||

We follow [Conventional Commits](https://www.conventionalcommits.org/):

|

||||

|

||||

```

|

||||

<type>: <short description>

|

||||

```

|

||||

|

||||

| Type | When to use |

|

||||

|--------------|--------------------------------------------------|

|

||||

| **feat** | New feature or capability |

|

||||

| **fix** | Bug fix |

|

||||

| **docs** | Documentation changes |

|

||||

| **style** | Formatting, whitespace (no logic change) |

|

||||

| **refactor** | Code restructuring without changing behavior |

|

||||

| **perf** | Performance improvement |

|

||||

| **test** | Adding or updating tests |

|

||||

| **build** | Build system or dependencies |

|

||||

| **ci** | CI/CD and pre-commit configuration |

|

||||

| **chore** | Routine maintenance and tooling |

|

||||

|

||||

**Examples:**

|

||||

|

||||

```

|

||||

feat: Add gaze estimation model

|

||||

fix: Correct bounding box scaling for non-square images

|

||||

ci: Add nbstripout pre-commit hook

|

||||

docs: Update installation instructions

|

||||

```

|

||||

|

||||

---

|

||||

|

||||

## Pull Request Process

|

||||

|

||||

1. Fork the repository

|

||||

|

||||

@@ -13,7 +13,7 @@ template: home.html

|

||||

<p class="hero-subtitle">All-in-One Open-Source Face Analysis Library</p>

|

||||

|

||||

[](https://pypi.org/project/uniface/)

|

||||

[](https://www.python.org/)

|

||||

[](https://www.python.org/)

|

||||

[](https://opensource.org/licenses/MIT)

|

||||

[](https://github.com/yakhyo/uniface/actions)

|

||||

[](https://pepy.tech/projects/uniface)

|

||||

@@ -36,7 +36,7 @@ ONNX-optimized detectors (RetinaFace, SCRFD, YOLO) with 5-point landmarks.

|

||||

|

||||

<div class="feature-card" markdown>

|

||||

### :material-account-check: Face Recognition

|

||||

AdaFace, ArcFace, MobileFace, and SphereFace embeddings for identity verification.

|

||||

AdaFace, ArcFace, EdgeFace, MobileFace, and SphereFace embeddings for identity verification.

|

||||

</div>

|

||||

|

||||

<div class="feature-card" markdown>

|

||||

|

||||

@@ -6,7 +6,7 @@ This guide covers all installation options for UniFace.

|

||||

|

||||

## Requirements

|

||||

|

||||

- **Python**: 3.11 or higher

|

||||

- **Python**: 3.10 or higher

|

||||

- **Operating Systems**: macOS, Linux, Windows

|

||||

|

||||

---

|

||||

@@ -70,16 +70,16 @@ print("Available providers:", ort.get_available_providers())

|

||||

|

||||

---

|

||||

|

||||

### FAISS Vector Indexing

|

||||

### FAISS Vector Store

|

||||

|

||||

For fast multi-identity face search using a FAISS index:

|

||||

For fast multi-identity face search using a FAISS vector store:

|

||||

|

||||

```bash

|

||||

pip install faiss-cpu # CPU

|

||||

pip install faiss-gpu # NVIDIA GPU (CUDA)

|

||||

```

|

||||

|

||||

See the [Indexing module](modules/indexing.md) for usage.

|

||||

See the [Stores module](modules/stores.md) for usage.

|

||||

|

||||

---

|

||||

|

||||

@@ -128,7 +128,7 @@ UniFace has minimal dependencies:

|

||||

|

||||

| Package | Install extra | Purpose |

|

||||

|---------|---------------|---------|

|

||||

| `faiss-cpu` / `faiss-gpu` | `pip install faiss-cpu` | FAISS vector indexing |

|

||||

| `faiss-cpu` / `faiss-gpu` | `pip install faiss-cpu` | FAISS vector store |

|

||||

| `onnxruntime-gpu` | `uniface[gpu]` | CUDA acceleration |

|

||||

| `torch` | `pip install torch` | Emotion model uses TorchScript |

|

||||

| `torchvision` | `pip install torchvision` | Faster NMS for YOLO detectors |

|

||||

@@ -159,11 +159,11 @@ print("Installation successful!")

|

||||

|

||||

### Import Errors

|

||||

|

||||

If you encounter import errors, ensure you're using Python 3.11+:

|

||||

If you encounter import errors, ensure you're using Python 3.10+:

|

||||

|

||||

```bash

|

||||

python --version

|

||||

# Should show: Python 3.11.x or higher

|

||||

# Should show: Python 3.10.x or higher

|

||||

```
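The same check can be done from inside Python, which helps when several interpreters are installed and `python` on the shell path is not the one running the code. A minimal sketch; `MIN_PYTHON` and `python_is_supported` are hypothetical names, with the 3.10 floor taken from the requirement above.

```python
import sys

# Minimum supported version, per the requirement stated above.
MIN_PYTHON = (3, 10)


def python_is_supported(version=None) -> bool:
    """Return True if the (major, minor) of `version` meets MIN_PYTHON.

    Defaults to the running interpreter when no version tuple is given.
    """
    major_minor = tuple((version or sys.version_info)[:2])
    return major_minor >= MIN_PYTHON


if python_is_supported():
    print(f'Python {sys.version_info.major}.{sys.version_info.minor} is supported')
else:
    print(f'Python {MIN_PYTHON[0]}.{MIN_PYTHON[1]}+ is required')
```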

|

||||

|

||||

### Model Download Issues

|

||||

|

||||

@@ -156,6 +156,24 @@ Face recognition using angular softmax loss.

|

||||

|

||||

---

|

||||

|

||||

### EdgeFace

|

||||

|

||||

Efficient face recognition designed for edge devices, using EdgeNeXt backbone with optional LoRA compression.

|

||||

|

||||

| Model Name | Backbone | Params | MFLOPs | Size | LFW | CALFW | CPLFW | CFP-FP | AgeDB-30 |

|

||||

| --------------- | -------- | ------ | ------ | ----- | ------ | ------ | ------ | ------ | -------- |

|

||||

| `XXS` :material-check-circle: | EdgeNeXt | 1.24M | 94 | ~5 MB | 99.57% | 94.83% | 90.27% | 93.63% | 94.92% |

|

||||

| `XS_GAMMA_06` | EdgeNeXt | 1.77M | 154 | ~7 MB | 99.73% | 95.28% | 91.58% | 94.71% | 96.08% |

|

||||

| `S_GAMMA_05` | EdgeNeXt | 3.65M | 306 | ~14 MB | 99.78% | 95.55% | 92.48% | 95.74% | 97.03% |

|

||||

| `BASE` | EdgeNeXt | 18.2M | 1399 | ~70 MB | 99.83% | 96.07% | 93.75% | 97.01% | 97.60% |

|

||||

|

||||

!!! info "Training Data & Reference"

|

||||

**Paper**: [EdgeFace: Efficient Face Recognition Model for Edge Devices](https://arxiv.org/abs/2307.01838v2) (IEEE T-BIOM 2024)

|

||||

|

||||

**Source**: [github.com/otroshi/edgeface](https://github.com/otroshi/edgeface) | [github.com/yakhyo/edgeface-onnx](https://github.com/yakhyo/edgeface-onnx)

|

||||

|

||||

---

|

||||

|

||||

## Facial Landmark Models

|

||||

|

||||

### 106-Point Landmark Detection

|

||||

|

||||

@@ -2,6 +2,11 @@

|

||||

|

||||

Facial attribute analysis for age, gender, race, and emotion detection.

|

||||

|

||||

<figure markdown="span">

|

||||

{ width="100%" }

|

||||

<figcaption>Age and gender prediction with detection bounding boxes</figcaption>

|

||||

</figure>

|

||||

|

||||

---

|

||||

|

||||

## Available Models

|

||||

|

||||

@@ -2,6 +2,11 @@

|

||||

|

||||

Face detection is the first step in any face analysis pipeline. UniFace provides four detection models.

|

||||

|

||||

<figure markdown="span">

|

||||

{ width="100%" }

|

||||

<figcaption>SCRFD detection with corner-style bounding boxes and 5-point landmarks</figcaption>

|

||||

</figure>

|

||||

|

||||

---

|

||||

|

||||

## Available Models

|

||||

@@ -264,10 +269,8 @@ from uniface.draw import draw_detections

 draw_detections(
     image=image,
-    bboxes=[f.bbox for f in faces],
-    scores=[f.confidence for f in faces],
-    landmarks=[f.landmarks for f in faces],
-    vis_threshold=0.6
+    faces=faces,
+    vis_threshold=0.6,
 )

 cv2.imwrite("result.jpg", image)

@@ -2,6 +2,11 @@

|

||||

|

||||

Gaze estimation predicts where a person is looking (pitch and yaw angles).

|

||||

|

||||

<figure markdown="span">

|

||||

{ width="100%" }

|

||||

<figcaption>Gaze direction arrows with pitch/yaw angle labels</figcaption>

|

||||

</figure>

|

||||

|

||||

---

|

||||

|

||||

## Available Models

|

||||

|

||||

@@ -2,6 +2,11 @@

|

||||

|

||||

Head pose estimation predicts the 3D orientation of a person's head (pitch, yaw, and roll angles).

|

||||

|

||||

<figure markdown="span">

|

||||

{ width="100%" }

|

||||

<figcaption>3D head pose visualization with pitch, yaw, and roll angles</figcaption>

|

||||

</figure>

|

||||

|

||||

---

|

||||

|

||||

## Available Models

|

||||

|

||||

@@ -2,6 +2,11 @@

|

||||

|

||||

Facial landmark detection provides precise localization of facial features.

|

||||

|

||||

<figure markdown="span">

|

||||

{ width="50%" }

|

||||

<figcaption>106-point facial landmark localization</figcaption>

|

||||

</figure>

|

||||

|

||||

---

|

||||

|

||||

## Available Models

|

||||

|

||||

@@ -2,6 +2,16 @@

|

||||

|

||||

Face parsing segments faces into semantic components or face regions.

|

||||

|

||||

<figure markdown="span">

|

||||

{ width="80%" }

|

||||

<figcaption>BiSeNet face parsing with 19 semantic component classes</figcaption>

|

||||

</figure>

|

||||

|

||||

<figure markdown="span">

|

||||

{ width="80%" }

|

||||

<figcaption>XSeg face region segmentation mask</figcaption>

|

||||

</figure>

|

||||

|

||||

---

|

||||

|

||||

## Available Models

|

||||

|

||||

@@ -2,6 +2,11 @@

|

||||

|

||||

Face anonymization protects privacy by blurring or obscuring faces in images and videos.

|

||||

|

||||

<figure markdown="span">

|

||||

{ width="100%" }

|

||||

<figcaption>Five anonymization methods: pixelate, gaussian, blackout, elliptical, and median</figcaption>

|

||||

</figure>

|

||||

|

||||

---

|

||||

|

||||

## Available Methods

|

||||

|

||||

@@ -2,6 +2,11 @@

|

||||

|

||||

Face recognition extracts embeddings for identity verification and face search.

|

||||

|

||||

<figure markdown="span">

|

||||

{ width="80%" }

|

||||

<figcaption>Pairwise face verification with cosine similarity scores</figcaption>

|

||||

</figure>

|

||||

|

||||

---

|

||||

|

||||

## Available Models

|

||||

@@ -10,6 +15,7 @@ Face recognition extracts embeddings for identity verification and face search.

|

||||

|-------|----------|------|---------------|

|

||||

| **AdaFace** | IR-18/IR-101 | 92-249 MB | 512 |

|

||||

| **ArcFace** | MobileNet/ResNet | 8-166 MB | 512 |

|

||||

| **EdgeFace** | EdgeNeXt/LoRA | 5-70 MB | 512 |

|

||||

| **MobileFace** | MobileNet V2/V3 | 1-10 MB | 512 |

|

||||

| **SphereFace** | Sphere20/36 | 50-92 MB | 512 |

|

||||

|

||||

@@ -113,6 +119,64 @@ recognizer = ArcFace(providers=['CPUExecutionProvider'])

|

||||

|

||||

---

|

||||

|

||||

## EdgeFace

|

||||

|

||||

Efficient face recognition designed for edge devices, using an EdgeNeXt backbone with optional LoRA low-rank compression. Competition-winning entry (compact track) at EFaR 2023, IJCB.

|

||||

|

||||

### Basic Usage

|

||||

|

||||

```python

|

||||

from uniface.detection import RetinaFace

|

||||

from uniface.recognition import EdgeFace

|

||||

|

||||

detector = RetinaFace()

|

||||

recognizer = EdgeFace()

|

||||

|

||||

# Detect face

|

||||

faces = detector.detect(image)

|

||||

|

||||

# Extract embedding

|

||||

if faces:

|

||||

embedding = recognizer.get_normalized_embedding(image, faces[0].landmarks)

|

||||

print(f"Embedding shape: {embedding.shape}") # (512,)

|

||||

```
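Embeddings like the one above are typically compared with cosine similarity, which the verification figure on this page also refers to. The sketch below uses only the standard library so it runs anywhere; with NumPy arrays the same computation is a normalized dot product. The helper names are hypothetical, and the short vectors stand in for 512-dimensional embeddings.

```python
import math


def l2_normalize(vec: list[float]) -> list[float]:
    """Scale a vector to unit length."""
    norm = math.sqrt(sum(x * x for x in vec))
    if norm == 0:
        raise ValueError('cannot normalize a zero vector')
    return [x / norm for x in vec]


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine similarity: the dot product of the two L2-normalized vectors."""
    a_n, b_n = l2_normalize(a), l2_normalize(b)
    return sum(x * y for x, y in zip(a_n, b_n))


# Toy vectors: same direction, orthogonal, and opposite.
print(round(cosine_similarity([1.0, 2.0, 3.0], [2.0, 4.0, 6.0]), 6))
print(round(cosine_similarity([1.0, 0.0], [0.0, 1.0]), 6))
print(round(cosine_similarity([1.0, 0.0], [-1.0, 0.0]), 6))
```

For embeddings that are already L2-normalized, the similarity reduces to a plain dot product. The decision threshold for "same person" depends on the model and is not specified here.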

|

||||

|

||||

### Model Variants

|

||||

|

||||

```python

|

||||

from uniface.recognition import EdgeFace

|

||||

from uniface.constants import EdgeFaceWeights

|

||||

|

||||

# Ultra-compact (default)

|

||||

recognizer = EdgeFace(model_name=EdgeFaceWeights.XXS)

|

||||

|

||||

# Compact with LoRA

|

||||

recognizer = EdgeFace(model_name=EdgeFaceWeights.XS_GAMMA_06)

|

||||

|

||||

# Small with LoRA

|

||||

recognizer = EdgeFace(model_name=EdgeFaceWeights.S_GAMMA_05)

|

||||

|

||||

# Full-size

|

||||

recognizer = EdgeFace(model_name=EdgeFaceWeights.BASE)

|

||||

|

||||

# Force CPU execution

|

||||

recognizer = EdgeFace(providers=['CPUExecutionProvider'])

|

||||

```

|

||||

|

||||

| Variant | Params | MFLOPs | Size | LFW | CALFW | CPLFW | CFP-FP | AgeDB-30 |

|

||||

|---------|--------|--------|------|-----|-------|-------|--------|----------|

|

||||

| **XXS** :material-check-circle: | 1.24M | 94 | ~5 MB | 99.57% | 94.83% | 90.27% | 93.63% | 94.92% |

|

||||

| XS_GAMMA_06 | 1.77M | 154 | ~7 MB | 99.73% | 95.28% | 91.58% | 94.71% | 96.08% |

|

||||

| S_GAMMA_05 | 3.65M | 306 | ~14 MB | 99.78% | 95.55% | 92.48% | 95.74% | 97.03% |

|

||||

| BASE | 18.2M | 1399 | ~70 MB | 99.83% | 96.07% | 93.75% | 97.01% | 97.60% |

|

||||

|

||||

!!! info "Reference"
    **Paper**: [EdgeFace: Efficient Face Recognition Model for Edge Devices](https://arxiv.org/abs/2307.01838v2) (IEEE T-BIOM 2024)

    **Source**: [github.com/otroshi/edgeface](https://github.com/otroshi/edgeface)

|

||||

|

||||

---

|

||||

|

||||

## MobileFace

|

||||

|

||||

Lightweight face recognition models with MobileNet backbones.

|

||||

@@ -287,9 +351,10 @@ else:

|

||||

```python

|

||||

from uniface.recognition import create_recognizer

|

||||

|

||||

# Available methods: 'arcface', 'adaface', 'mobileface', 'sphereface'

|

||||

# Available methods: 'arcface', 'adaface', 'edgeface', 'mobileface', 'sphereface'

|

||||

recognizer = create_recognizer('arcface')

|

||||

recognizer = create_recognizer('adaface')

|

||||

recognizer = create_recognizer('edgeface')

|

||||

```
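
For readers unfamiliar with string-keyed factories, the following is a minimal sketch of how such a dispatch could work. It is not UniFace's actual implementation: the stub classes, `_REGISTRY`, and `create_recognizer_sketch` are all hypothetical, and raising `ValueError` for an unknown method is an assumption (the test suite in this change set only states that an invalid method raises an error).

```python
class _StubRecognizer:
    """Stand-in for a real recognizer class (illustration only)."""

    def __init__(self, **kwargs):
        # Keep the keyword arguments so callers can inspect them.
        self.kwargs = kwargs


class ArcFaceStub(_StubRecognizer):
    pass


class EdgeFaceStub(_StubRecognizer):
    pass


# Map method names to classes; adding a model means adding one entry.
_REGISTRY = {
    "arcface": ArcFaceStub,
    "edgeface": EdgeFaceStub,
}


def create_recognizer_sketch(method="arcface", **kwargs):
    """Instantiate a recognizer by name (hypothetical helper)."""
    try:
        cls = _REGISTRY[method.lower()]
    except KeyError:
        available = ", ".join(sorted(_REGISTRY))
        raise ValueError(
            f"Unknown method '{method}'. Available: {available}"
        ) from None
    return cls(**kwargs)


recognizer = create_recognizer_sketch("edgeface")
print(type(recognizer).__name__)  # EdgeFaceStub
```

Under this design, registering a new backend such as EdgeFace only touches the registry, which is consistent with the one-line documentation change in this hunk.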

|

||||

|

||||

---

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

# Indexing

|

||||

# Stores

|

||||

|

||||

FAISS-backed vector store for fast similarity search over embeddings.

|

||||

|

||||

@@ -12,7 +12,7 @@ FAISS-backed vector store for fast similarity search over embeddings.

|

||||

## FAISS

|

||||

|

||||

```python

|

||||

from uniface.indexing import FAISS

|

||||

from uniface.stores import FAISS

|

||||

```

|

||||

|

||||

A thin wrapper around a FAISS `IndexFlatIP` (inner-product) index. Vectors

|

||||

@@ -134,7 +134,7 @@ loaded = store.load() # True if files exist

|

||||

import cv2

|

||||

from uniface.detection import RetinaFace

|

||||

from uniface.recognition import ArcFace

|

||||

from uniface.indexing import FAISS

|

||||

from uniface.stores import FAISS

|

||||

|

||||

detector = RetinaFace()

|

||||

recognizer = ArcFace()

|

||||

@@ -19,6 +19,7 @@ Run UniFace examples directly in your browser with Google Colab, or download and

|

||||

| [Face Segmentation](https://github.com/yakhyo/uniface/blob/main/examples/09_face_segmentation.ipynb) | [](https://colab.research.google.com/github/yakhyo/uniface/blob/main/examples/09_face_segmentation.ipynb) | Face segmentation with XSeg |

|

||||

| [Face Vector Store](https://github.com/yakhyo/uniface/blob/main/examples/10_face_vector_store.ipynb) | [](https://colab.research.google.com/github/yakhyo/uniface/blob/main/examples/10_face_vector_store.ipynb) | FAISS-backed face database |

|

||||

| [Head Pose Estimation](https://github.com/yakhyo/uniface/blob/main/examples/11_head_pose_estimation.ipynb) | [](https://colab.research.google.com/github/yakhyo/uniface/blob/main/examples/11_head_pose_estimation.ipynb) | 3D head orientation estimation |

|

||||

| [Face Recognition](https://github.com/yakhyo/uniface/blob/main/examples/12_face_recognition.ipynb) | [](https://colab.research.google.com/github/yakhyo/uniface/blob/main/examples/12_face_recognition.ipynb) | Standalone face recognition pipeline |

|

||||

|

||||

---

|

||||

|

||||

|

||||

@@ -54,19 +54,8 @@ detector = RetinaFace()

|

||||

image = cv2.imread("photo.jpg")

|

||||

faces = detector.detect(image)

|

||||

|

||||

# Extract visualization data

|

||||

bboxes = [f.bbox for f in faces]

|

||||

scores = [f.confidence for f in faces]

|

||||

landmarks = [f.landmarks for f in faces]

|

||||

|

||||

# Draw on image

|

||||

draw_detections(

|

||||

image=image,

|

||||

bboxes=bboxes,

|

||||

scores=scores,

|

||||

landmarks=landmarks,

|

||||

vis_threshold=0.6,

|

||||

)

|

||||

draw_detections(image=image, faces=faces, vis_threshold=0.6)

|

||||

|

||||

# Save result

|

||||

cv2.imwrite("output.jpg", image)

|

||||

@@ -372,10 +361,7 @@ while True:

|

||||

|

||||

faces = detector.detect(frame)

|

||||

|

||||

bboxes = [f.bbox for f in faces]

|

||||

scores = [f.confidence for f in faces]

|

||||

landmarks = [f.landmarks for f in faces]

|

||||

draw_detections(image=frame, bboxes=bboxes, scores=scores, landmarks=landmarks)

|

||||

draw_detections(image=frame, faces=faces)

|

||||

|

||||

cv2.imshow("UniFace - Press 'q' to quit", frame)

|

||||

|

||||

@@ -507,7 +493,7 @@ from uniface.privacy import BlurFace

|

||||

from uniface.spoofing import MiniFASNet

|

||||

from uniface.tracking import BYTETracker

|

||||

from uniface.analyzer import FaceAnalyzer

|

||||

from uniface.indexing import FAISS # pip install faiss-cpu

|

||||

from uniface.stores import FAISS # pip install faiss-cpu

|

||||

from uniface.draw import draw_detections, draw_tracks

|

||||

```

|

||||

|

||||

|

||||

@@ -52,7 +52,7 @@ python tools/search.py --reference ref.jpg --source 0 # webcam

|

||||

## Vector Search (FAISS index)

|

||||

|

||||

For identifying faces against a database of many known people, use the

|

||||

[`FAISS`](../modules/indexing.md) vector store.

|

||||

[`FAISS`](../modules/stores.md) vector store.

|

||||

|

||||

!!! info "Install extra"

|

||||

`bash

|

||||

@@ -80,7 +80,7 @@ import cv2

|

||||

from pathlib import Path

|

||||

from uniface.detection import RetinaFace

|

||||

from uniface.recognition import ArcFace

|

||||

from uniface.indexing import FAISS

|

||||

from uniface.stores import FAISS

|

||||

|

||||

detector = RetinaFace()

|

||||

recognizer = ArcFace()

|

||||

@@ -112,7 +112,7 @@ python tools/faiss_search.py build --faces-dir dataset/ --db-path ./my_index

|

||||

import cv2

|

||||

from uniface.detection import RetinaFace

|

||||

from uniface.recognition import ArcFace

|

||||

from uniface.indexing import FAISS

|

||||

from uniface.stores import FAISS

|

||||

|

||||

detector = RetinaFace()

|

||||

recognizer = ArcFace()

|

||||

@@ -143,7 +143,7 @@ python tools/faiss_search.py run --db-path ./my_index --source 0 # webcam

|

||||

### Manage the index

|

||||

|

||||

```python

|

||||

from uniface.indexing import FAISS

|

||||

from uniface.stores import FAISS

|

||||

|

||||

store = FAISS(db_path="./my_index")

|

||||

store.load()

|

||||

@@ -160,7 +160,7 @@ store.save()

|

||||

|

||||

## See Also

|

||||

|

||||

- [Indexing Module](../modules/indexing.md) - Full `FAISS` API reference

|

||||

- [Stores Module](../modules/stores.md) - Full `FAISS` API reference

|

||||

- [Recognition Module](../modules/recognition.md) - Face recognition details

|

||||

- [Video & Webcam](video-webcam.md) - Real-time processing

|

||||

- [Concepts: Thresholds](../concepts/thresholds-calibration.md) - Tuning similarity thresholds

|

||||

|

||||

@@ -48,12 +48,7 @@ def process_image(image_path):

|

||||

print(f" Face {i+1}: {attrs.sex}, {attrs.age} years old")

|

||||

|

||||

# Visualize

|

||||

draw_detections(

|

||||

image=image,

|

||||

bboxes=[f.bbox for f in faces],

|

||||

scores=[f.confidence for f in faces],

|

||||

landmarks=[f.landmarks for f in faces]

|

||||

)

|

||||

draw_detections(image=image, faces=faces)

|

||||

|

||||

return image, results

|

||||

|

||||

|

||||

@@ -26,12 +26,7 @@ while True:

|

||||

|

||||

faces = detector.detect(frame)

|

||||

|

||||

draw_detections(

|

||||

image=frame,

|

||||

bboxes=[f.bbox for f in faces],

|

||||

scores=[f.confidence for f in faces],

|

||||

landmarks=[f.landmarks for f in faces]

|

||||

)

|

||||

draw_detections(image=frame, faces=faces)

|

||||

|

||||

cv2.imshow("Face Detection", frame)

|

||||

|

||||

|

||||

356

examples/12_face_recognition.ipynb

Normal file

@@ -0,0 +1,356 @@

|

||||

{

|

||||

"cells": [

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"id": "0",

|

||||

"metadata": {},

|

||||

"source": [

|

||||

"# Face Recognition: RetinaFace → Align → ArcFace\n",

|

||||

"\n",

|

||||

"<div style=\"display:flex; flex-wrap:wrap; align-items:center;\">\n",

|

||||

" <a style=\"margin-right:10px; margin-bottom:6px;\" href=\"https://pepy.tech/projects/uniface\"><img alt=\"PyPI Downloads\" src=\"https://static.pepy.tech/personalized-badge/uniface?period=total&units=international_system&left_color=grey&right_color=blue&left_text=Downloads\"></a>\n",

|

||||

" <a style=\"margin-right:10px; margin-bottom:6px;\" href=\"https://pypi.org/project/uniface/\"><img alt=\"PyPI Version\" src=\"https://img.shields.io/pypi/v/uniface.svg\"></a>\n",

|

||||

" <a style=\"margin-right:10px; margin-bottom:6px;\" href=\"https://opensource.org/licenses/MIT\"><img alt=\"License\" src=\"https://img.shields.io/badge/License-MIT-blue.svg\"></a>\n",

|

||||

" <a style=\"margin-bottom:6px;\" href=\"https://github.com/yakhyo/uniface\"><img alt=\"GitHub Stars\" src=\"https://img.shields.io/github/stars/yakhyo/uniface.svg?style=social\"></a>\n",

|

||||

"</div>\n",

|

||||

"\n",

|

||||

"**UniFace** is a lightweight, production-ready, all-in-one face analysis library built on ONNX Runtime.\n",

|

||||

"\n",

|

||||

"🔗 **GitHub**: [github.com/yakhyo/uniface](https://github.com/yakhyo/uniface) | 📚 **Docs**: [yakhyo.github.io/uniface](https://yakhyo.github.io/uniface)\n",

|

||||

"\n",

|

||||

"---\n",

|

||||

"\n",

|

||||

"This notebook demonstrates face recognition **without** the high-level `FaceAnalyzer` wrapper. Each step is handled manually:\n",

|

||||

"\n",

|

||||

"1. **RetinaFace**: Detects faces and extracts 5-point landmarks.\n",

|

||||

"2. **Face Alignment**: Warps each face into a standardized 112x112 crop using the landmarks.\n",

|

||||

"3. **ArcFace**: Generates a 512-D L2-normalized embedding from the aligned crop.\n",

|

||||

"\n",

|

||||

"We compare three test images: `image0.jpg`, `image1.jpg`, and `image5.jpg`."

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"id": "1",

|

||||

"metadata": {},

|

||||

"source": [

|

||||

"## 1. Install UniFace"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"id": "2",

|

||||

"metadata": {},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

"%pip install -q uniface\n",

|

||||

"\n",

|

||||

"# Clone repo for assets (Colab only)\n",

|

||||

"import os\n",

|

||||

"if 'COLAB_GPU' in os.environ or 'COLAB_RELEASE_TAG' in os.environ:\n",

|

||||

" if not os.path.exists('uniface'):\n",

|

||||

" !git clone --depth 1 https://github.com/yakhyo/uniface.git\n",

|

||||

" os.chdir('uniface/examples')"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"id": "3",

|

||||

"metadata": {},

|

||||

"source": [

|

||||

"## 2. Import Libraries"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"id": "4",

|

||||

"metadata": {},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

"import cv2\n",

|

||||

"import numpy as np\n",

|

||||

"import matplotlib.pyplot as plt\n",

|

||||

"import matplotlib.patches as patches\n",

|

||||

"\n",

|

||||

"import uniface\n",

|

||||

"from uniface.detection import RetinaFace\n",

|

||||

"from uniface.recognition import ArcFace\n",

|

||||

"from uniface.face_utils import face_alignment\n",

|

||||

"\n",

|

||||

"print(f\"UniFace version: {uniface.__version__}\")"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"id": "5",

|

||||

"metadata": {},

|

||||

"source": [

|

||||

"## 3. Configuration"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"id": "6",

|

||||

"metadata": {},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

"IMAGE_PATHS = {\n",

|

||||

" \"image0\": \"../assets/test_images/image0.jpg\",\n",

|

||||

" \"image1\": \"../assets/test_images/image1.jpg\",\n",

|

||||

" \"image5\": \"../assets/test_images/image5.jpg\",\n",

|

||||

"}\n",

|

||||

"THRESHOLD = 0.4 # Cosine similarity threshold for \"same person\""

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"id": "7",

|

||||

"metadata": {},

|

||||

"source": [

|

||||

"## 4. Initialize Models"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"id": "8",

|

||||

"metadata": {},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

"detector = RetinaFace(confidence_threshold=0.5)\n",

|

||||

"recognizer = ArcFace()"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"id": "9",

|

||||

"metadata": {},

|

||||

"source": [

|

||||

"## 5. Load Images & Detect Faces\n",

|

||||

"\n",

|

||||

"We use the detector to find faces and their landmarks in each image."

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"id": "10",

|

||||

"metadata": {},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

"images = {}\n",

|

||||

"faces = {}\n",

|

||||

"\n",

|

||||

"for name, path in IMAGE_PATHS.items():\n",

|

||||

" img = cv2.imread(path)\n",

|

||||

" if img is None:\n",

|

||||

" raise FileNotFoundError(f\"Cannot read: {path}\")\n",

|

||||

"\n",

|

||||

" detected = detector.detect(img)\n",

|

||||

" if not detected:\n",

|

||||

" raise RuntimeError(f\"No face detected in: {path}\")\n",

|

||||

"\n",

|

||||

" images[name] = img\n",

|

||||

" faces[name] = detected[0] # Keep highest-confidence face\n",

|

||||

" print(f\"{name:8s} | {len(detected)} face(s) detected | confidence={faces[name].confidence:.3f}\")"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"id": "11",

|

||||

"metadata": {},

|

||||

"source": [

|

||||

"## 6. Visualize Detections"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"id": "12",

|

||||

"metadata": {},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

"LM_COLORS = [\"red\", \"blue\", \"green\", \"cyan\", \"magenta\"]\n",

|

||||

"\n",

|

||||

"fig, axes = plt.subplots(1, 3, figsize=(15, 5))\n",

|

||||

"fig.suptitle(\"Detected Faces & 5-Point Landmarks\", fontweight=\"bold\", fontsize=16)\n",

|

||||

"\n",

|

||||

"for ax, (name, img) in zip(axes, images.items()):\n",

|

||||

" face = faces[name]\n",

|

||||

" ax.imshow(cv2.cvtColor(img, cv2.COLOR_BGR2RGB))\n",

|

||||

" ax.set_title(f\"{name}\\nconf={face.confidence:.3f}\", fontsize=12)\n",

|

||||

" ax.axis(\"off\")\n",

|

||||

"\n",

|

||||

" # Bounding box\n",

|

||||

" x1, y1, x2, y2 = face.bbox.astype(int)\n",

|

||||

" ax.add_patch(patches.Rectangle(\n",

|

||||

" (x1, y1), x2 - x1, y2 - y1,\n",

|

||||

" linewidth=2, edgecolor=\"lime\", facecolor=\"none\"))\n",

|

||||

"\n",

|

||||

" # Landmarks\n",

|

||||

" for (lx, ly), c in zip(face.landmarks, LM_COLORS):\n",

|

||||

" ax.plot(lx, ly, \"o\", color=c, markersize=6)\n",

|

||||

"\n",

|

||||

"plt.tight_layout()\n",

|

||||

"plt.show()"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"id": "13",

|

||||

"metadata": {},

|

||||

"source": [

|

||||

"## 7. Face Alignment\n",

|

||||

"\n",

|

||||

"We warp the detected faces into a standardized 112x112 size. This improves recognition accuracy."

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"id": "14",

|

||||

"metadata": {},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

"aligned = {}\n",

|

||||

"\n",

|

||||

"for name, img in images.items():\n",

|

||||

" lm = faces[name].landmarks\n",

|

||||

" crop, _ = face_alignment(img, lm, image_size=(112, 112))\n",

|

||||

" aligned[name] = crop\n",

|

||||

"\n",

|

||||

"fig, axes = plt.subplots(1, 3, figsize=(12, 4))\n",

|

||||

"fig.suptitle(\"Aligned Face Crops (112x112)\", fontweight=\"bold\", fontsize=14)\n",

|

||||

"\n",

|

||||

"for ax, (name, crop) in zip(axes, aligned.items()):\n",

|

||||

" ax.imshow(cv2.cvtColor(crop, cv2.COLOR_BGR2RGB))\n",

|

||||

" ax.set_title(name, fontsize=12)\n",

|

||||

" ax.axis(\"off\")\n",

|

||||

"\n",

|

||||

"plt.tight_layout()\n",

|

||||

"plt.show()"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"id": "15",

|

||||

"metadata": {},

|

||||

"source": [

|

||||

"## 8. Extract Embeddings\n",

|

||||

"\n",

|

||||

"We pass the aligned crops to ArcFace to get the 512-D vectors."

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"id": "16",

|

||||

"metadata": {},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

"embeddings = {}\n",

|

||||

"\n",

|

||||

"for name, crop in aligned.items():\n",

|

||||

" # landmarks=None because image is already aligned\n",

|

||||

" emb = recognizer.get_normalized_embedding(crop, landmarks=None)\n",

|

||||

" embeddings[name] = emb\n",

|

||||

" print(f\"{name:8s} | embedding shape={emb.shape} | L2-norm={np.linalg.norm(emb):.4f}\")"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"id": "17",

|

||||

"metadata": {},

|

||||

"source": [

|

||||

"## 9. Pairwise Cosine Similarity\n",

|

||||

"\n",

|

||||

"Since embeddings are normalized, cosine similarity is just the dot product."

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"id": "18",

|

||||

"metadata": {},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

"names = list(embeddings.keys())\n",

|

||||

"n = len(names)\n",

|

||||

"sim_matrix = np.zeros((n, n))\n",

|

||||

"\n",

|

||||

"for i, ni in enumerate(names):\n",

|

||||

" for j, nj in enumerate(names):\n",

|

||||

" # Use squeeze() to handle (1, 512) shapes if present\n",

|

||||

" sim_matrix[i, j] = float(np.dot(embeddings[ni].squeeze(), embeddings[nj].squeeze()))\n",

|

||||

"\n",

|

||||

"# Print comparison results\n",

|

||||

"pairs = [(names[i], names[j]) for i in range(n) for j in range(i + 1, n)]\n",

|

||||

"for a, b in pairs:\n",

|

||||

" s = float(np.dot(embeddings[a].squeeze(), embeddings[b].squeeze()))\n",

|

||||

" verdict = \"✓ Same person\" if s >= THRESHOLD else \"✗ Different people\"\n",

|

||||

" print(f\"{a} vs {b}: similarity={s:.4f} → {verdict}\")"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"id": "19",

|

||||

"metadata": {},

|

||||

"source": [

|

||||

"## 10. Similarity Heatmap"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"id": "20",

|

||||

"metadata": {},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

"fig, ax = plt.subplots(figsize=(8, 6))\n",

|

||||

"im = ax.imshow(sim_matrix, vmin=0, vmax=1, cmap=\"viridis\")\n",

|

||||

"plt.colorbar(im, ax=ax, label=\"Cosine similarity\")\n",

|

||||

"\n",

|

||||

"ax.set_xticks(range(n))\n",

|

||||

"ax.set_yticks(range(n))\n",

|

||||

"ax.set_xticklabels(names, rotation=30, ha=\"right\")\n",

|

||||

"ax.set_yticklabels(names)\n",

|

||||

"ax.set_title(\"Pairwise Face Similarity (ArcFace)\", fontweight=\"bold\")\n",

|

||||

"\n",

|

||||

"for i in range(n):\n",

|

||||

" for j in range(n):\n",

|

||||

" val = sim_matrix[i, j]\n",

|

||||

" ax.text(j, i, f\"{val:.2f}\",\n",

|

||||

" ha=\"center\", va=\"center\",\n",

|

||||

" color=\"black\" if val >= 0.6 else \"white\",\n",

|

||||

" fontsize=12, fontweight=\"bold\")\n",

|

||||

"\n",

|

||||

"plt.tight_layout()\n",

|

||||

"plt.show()"

|

||||

]

|

||||

}

|

||||

],

|

||||

"metadata": {

|

||||

"kernelspec": {

|

||||

"display_name": "base",

|

||||

"language": "python",

|

||||

"name": "python3"

|

||||

},

|

||||

"language_info": {

|

||||

"codemirror_mode": {

|

||||

"name": "ipython",

|

||||

"version": 3

|

||||

},

|

||||

"file_extension": ".py",

|

||||

"mimetype": "text/x-python",

|

||||

"name": "python",

|

||||

"nbconvert_exporter": "python",

|

||||

"pygments_lexer": "ipython3",

|

||||

"version": "3.13.5"

|

||||

}

|

||||

},

|

||||

"nbformat": 4,

|

||||

"nbformat_minor": 5

|

||||

}

|

||||

@@ -154,7 +154,7 @@ nav:

|

||||

- Head Pose: modules/headpose.md

|

||||

- Anti-Spoofing: modules/spoofing.md

|

||||

- Privacy: modules/privacy.md

|

||||

- Indexing: modules/indexing.md

|

||||

- Stores: modules/stores.md

|

||||

- Guides:

|

||||

- Overview: concepts/overview.md

|

||||

- Inputs & Outputs: concepts/inputs-outputs.md

|

||||

|

||||

@@ -1,6 +1,6 @@

|

||||

[project]

|

||||

name = "uniface"

|

||||

version = "3.2.0"

|

||||

version = "3.4.0"

|

||||

description = "UniFace: A Comprehensive Library for Face Detection, Recognition, Tracking, Landmark Analysis, Face Parsing, Gaze Estimation, Age, and Gender Detection"

|

||||

readme = "README.md"

|

||||

license = "MIT"

|

||||

@@ -9,7 +9,7 @@ maintainers = [

|

||||

{ name = "Yakhyokhuja Valikhujaev", email = "yakhyo9696@gmail.com" },

|

||||

]

|

||||

|

||||

requires-python = ">=3.11,<3.15"

|

||||

requires-python = ">=3.10,<3.15"

|

||||

keywords = [

|

||||

"face-detection",

|

||||

"face-recognition",

|

||||

@@ -34,6 +34,7 @@ classifiers = [

|

||||

"Intended Audience :: Science/Research",

|

||||

"Operating System :: OS Independent",

|

||||

"Programming Language :: Python :: 3",

|

||||

"Programming Language :: Python :: 3.10",

|

||||

"Programming Language :: Python :: 3.11",

|

||||

"Programming Language :: Python :: 3.12",

|

||||

"Programming Language :: Python :: 3.13",

|

||||

@@ -44,7 +45,7 @@ dependencies = [

|

||||

"numpy>=1.21.0",

|

||||

"opencv-python>=4.5.0",

|

||||

"onnxruntime>=1.16.0",

|

||||

"scikit-image>=0.26.0",

|

||||

"scikit-image>=0.22.0",

|

||||

"scipy>=1.7.0",

|

||||

"requests>=2.28.0",

|

||||

"tqdm>=4.64.0",

|

||||

@@ -73,7 +74,7 @@ uniface = ["py.typed"]

|

||||

|

||||

[tool.ruff]

|

||||

line-length = 120

|

||||

target-version = "py311"

|

||||

target-version = "py310"

|

||||

exclude = [

|

||||

".git",

|

||||

".ruff_cache",

|

||||

|

||||

@@ -1,7 +1,7 @@

|

||||

numpy>=1.21.0

|

||||

opencv-python>=4.5.0

|

||||

onnxruntime>=1.16.0

|

||||

scikit-image>=0.26.0

|

||||

scikit-image>=0.22.0

|

||||

scipy>=1.7.0

|

||||

requests>=2.28.0

|

||||

tqdm>=4.64.0

|

||||

|

||||

@@ -91,6 +91,12 @@ def test_create_recognizer_sphereface():

|

||||

assert recognizer is not None, 'Failed to create SphereFace recognizer'

|

||||

|

||||

|

||||

def test_create_recognizer_edgeface():
    """Test creating an EdgeFace recognizer using factory function."""
    recognizer = create_recognizer('edgeface')
    assert recognizer is not None, 'Failed to create EdgeFace recognizer'

|

||||

|

||||

|

||||

def test_create_recognizer_invalid_method():

|

||||

"""

|

||||

Test that invalid recognizer method raises an error.

|

||||

|

||||

@@ -8,7 +8,7 @@ from __future__ import annotations

|

||||

import numpy as np

|

||||

import pytest

|

||||

|

||||

from uniface.recognition import ArcFace, MobileFace, SphereFace

|

||||

from uniface.recognition import ArcFace, EdgeFace, MobileFace, SphereFace

|

||||

|

||||

|

||||

@pytest.fixture

|

||||

@@ -35,6 +35,12 @@ def sphereface_model():

|

||||

return SphereFace()

|

||||

|

||||

|

||||

@pytest.fixture
def edgeface_model():
    """Fixture to initialize the EdgeFace model for testing."""
    return EdgeFace()

|

||||

|

||||

|

||||

@pytest.fixture

|

||||

def mock_aligned_face():

|

||||

"""

|

||||

@@ -176,6 +182,45 @@ def test_sphereface_normalized_embedding(sphereface_model, mock_landmarks):

|

||||

assert np.isclose(norm, 1.0, atol=1e-5), f'Normalized embedding should have norm 1.0, got {norm}'

|

||||

|

||||

|

||||

# EdgeFace Tests
def test_edgeface_initialization(edgeface_model):
    """Test that the EdgeFace model initializes correctly."""
    assert edgeface_model is not None, 'EdgeFace model initialization failed.'


def test_edgeface_embedding_shape(edgeface_model, mock_aligned_face):
    """Test that EdgeFace produces embeddings with the correct shape."""
    embedding = edgeface_model.get_embedding(mock_aligned_face)

    assert embedding.shape[1] == 512, f'Expected 512-dim embedding, got {embedding.shape[1]}'
    assert embedding.shape[0] == 1, 'Embedding should have batch dimension of 1'


def test_edgeface_normalized_embedding(edgeface_model, mock_landmarks):
    """Test that EdgeFace normalized embeddings have unit length."""
    mock_image = np.random.randint(0, 255, (640, 640, 3), dtype=np.uint8)

    embedding = edgeface_model.get_normalized_embedding(mock_image, mock_landmarks)

    assert embedding.shape == (512,), f'Expected shape (512,), got {embedding.shape}'
    norm = np.linalg.norm(embedding)

|

||||